User reviews reveal the why behind the data. They highlight what users love, what frustrates them, and what ultimately drives their decisions. At scale, reviews become one of the most valuable datasets to collect, and one of the hardest. Whether it’s an app or an e-commerce marketplace, reviews act as a direct line to customer sentiment.

As businesses grow and expand across platforms, the volume of reviews increases rapidly. Manually collecting and analyzing this data is not only time-consuming but also inefficient. To truly unlock the value of user feedback, companies need a way to gather and process reviews at scale.

What is App Store & Marketplace Review Scraping?

App store and marketplace review scraping is the process of automatically collecting user feedback from various platforms at scale. Instead of manually going through individual reviews, scraping allows you to extract large volumes of data quickly and efficiently.

This includes gathering reviews from popular platforms such as the Apple App Store, Google Play Store, Amazon, and other online marketplaces where users actively share their experiences.

The goal is to turn unstructured user feedback into structured data that can be analyzed for insights.

Typically, review scraping focuses on collecting key data points such as:

- Ratings (e.g., star ratings)

- Review text (user comments and feedback)

- Timestamps (when the reviews were posted)

- Region or location of the user

- App or product version (especially important for tracking updates and changes)
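As a rough illustration, the data points above could be normalized into a single record type. This is a minimal sketch: the `Review` class, the `normalize` helper, and the raw keys (`id`, `rating`, `text`, `timestamp`, `version`) are hypothetical names chosen for the example, not fields any specific platform is known to return.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Review:
    """One normalized review record; field names are illustrative."""
    review_id: str
    rating: int                    # star rating, 1 to 5
    text: str
    posted_at: datetime            # stored in UTC
    region: str                    # storefront country code, e.g. "us"
    version: Optional[str] = None  # app or product version, if exposed

    def __post_init__(self) -> None:
        if not 1 <= self.rating <= 5:
            raise ValueError(f"rating out of range: {self.rating}")


def normalize(raw: dict, region: str) -> Review:
    """Map a raw scraped dict (hypothetical keys) onto the Review schema."""
    return Review(
        review_id=str(raw["id"]),
        rating=int(raw["rating"]),
        text=raw.get("text", "").strip(),
        posted_at=datetime.fromtimestamp(raw["timestamp"], tz=timezone.utc),
        region=region.lower(),
        version=raw.get("version"),
    )
```

Normalizing early means every downstream step (deduplication, sentiment analysis, regional comparison) can rely on the same field names regardless of which platform the review came from.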

Why Scraping Reviews Matters

Here’s why scraping reviews matters:

Product Feedback & Bug Detection

Reviews often highlight issues that internal testing may miss. Users quickly point out bugs, crashes, or usability problems in real-world scenarios. By analyzing reviews at scale, teams can identify recurring issues faster and prioritize fixes more effectively.

Sentiment Analysis at Scale

It’s one thing to read a handful of reviews, but it’s another to understand sentiment across thousands of users. Scraping enables large-scale sentiment analysis, helping you identify patterns in how users feel about your product.

Competitive Intelligence

By analyzing competitor reviews, you can uncover gaps in their offerings, understand what users like or dislike, and position your product more strategically in the market.

Market-Specific Insights

User behavior and expectations can vary significantly across regions. What works well in one market may not resonate in another. By analyzing reviews based on geography, language, or demographics, businesses can tailor their strategies to better serve different audiences.

Why Review Scraping Is Harder Than It Looks

When you try this at scale, several challenges may emerge:

Geo-Segmented Data

Reviews are often segmented by region, meaning users in different countries may see different sets of reviews. To get a complete picture, you need to collect data across multiple geographies, which adds complexity to the scraping process.

Platform-Specific Storefronts

Each platform has its own structure, layout, and data delivery methods. There’s no common approach, so scraping logic needs to be customized for each platform.

Token-Based Pagination

Many platforms don’t use simple page numbers to load reviews. Instead, they rely on dynamic tokens or cursors to fetch the next set of data. Handling this type of pagination requires more advanced logic to ensure you’re collecting reviews consistently without missing or duplicating data.

High Update Frequency

Reviews are constantly being added, updated, or removed. This means scraping isn’t a one-time task; it needs to be continuous. Keeping your dataset fresh and up to date requires frequent data collection and efficient processing.

The Challenges of Geo-Restricted Review Data

Reviews are often tailored based on location, which means the dataset you collect can vary significantly depending on where the request is coming from.

Reviews Differ by Country

App stores and marketplaces frequently display reviews based on the user’s region. This means a user in India might see a completely different set of reviews compared to someone in the US or Europe. To capture a complete dataset, you need to account for these regional differences.

Ratings Vary Across Regions

User expectations and experiences can differ by geography, which directly impacts ratings. A product might have strong ratings in one country but receive lower ratings in another due to cultural preferences, performance issues, or localized competition.

Platform-Level Validation

Platforms actively verify where requests are coming from before serving review data. This validation typically happens through:

- URL parameters (such as country or locale settings)

- IP location (ensuring the request originates from the expected region)

Because of this, simply changing a URL parameter isn’t always enough. The platform may still restrict or alter the data based on your IP location.

To truly scrape reviews at scale, you need to align both your request parameters and your geographic origin; otherwise, you risk collecting incomplete or misleading data.
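One way to enforce that alignment is to refuse to build a request unless a proxy exit exists for the same country as the storefront parameter. The sketch below assumes a hypothetical URL path, a hypothetical `country` query parameter, and a caller-supplied set of countries your proxy pool covers; real platforms will differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GeoRequest:
    url: str
    params: dict
    proxy_country: str


def build_geo_request(base_url: str, app_id: str, country: str,
                      proxy_countries: set) -> GeoRequest:
    """Pair the storefront parameter with an exit IP in the same country."""
    country = country.lower()
    if country not in proxy_countries:
        # Refuse to send a mismatched request rather than collect skewed data.
        raise ValueError(f"no proxy available for country {country!r}")
    return GeoRequest(
        url=f"{base_url}/apps/{app_id}/reviews",  # hypothetical path
        params={"country": country},              # hypothetical parameter name
        proxy_country=country,
    )
```

Failing loudly on a mismatch is a deliberate choice here: a missing region is easier to notice and backfill than a dataset that silently mixes storefronts.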

Enabling Geo-Accurate Review Collection

Handling geo-restricted data often requires more than just adjusting request parameters; it also depends on where your requests are coming from. To collect accurate, region-specific reviews, your infrastructure needs to mimic real user locations.

Infrastructure providers like Decodo enable geo-targeted scraping by routing requests through country-level residential IPs, ensuring platforms return accurate local review data.

This makes it possible to access region-specific reviews reliably, without running into mismatches between requested locations and actual IP origins.

Handling Pagination & High-Volume Requests

When scraping reviews at scale, one of the biggest technical challenges is handling how data is loaded and retrieved. Unlike simple websites with numbered pages, most app stores and marketplaces use more complex systems to serve large volumes of reviews.

Token-Based Pagination

Instead of traditional page numbers, many platforms rely on tokens or cursors to load the next set of reviews. Each request returns a token that is required to fetch the following batch of data. This makes the process dynamic, but also more complex to manage.

Sequential Dependency

With token-based systems, requests often depend on the previous response. You can’t skip ahead or request multiple pages independently; you need to follow the sequence step by step. This creates a dependency chain, where each request must be completed before the next one begins.

On a smaller scale, this might not seem like a big issue. But when you’re dealing with thousands or millions of reviews, these dependencies can slow down data collection significantly.
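The dependency chain can be sketched as a generator that feeds each response's token into the next request. The `fetch_page` callable is an assumption standing in for a platform-specific request; `FAKE_PAGES` and `fake_fetch` exist only to make the example runnable without a network.

```python
from typing import Callable, Iterator, Optional, Tuple

# A page fetcher takes the previous token (None for the first page) and
# returns (reviews, next_token). Its real implementation is platform-specific.
FetchPage = Callable[[Optional[str]], Tuple[list, Optional[str]]]


def iter_reviews(fetch_page: FetchPage, max_pages: int = 1000) -> Iterator[dict]:
    """Walk a cursor chain one page at a time until the token runs out."""
    token: Optional[str] = None
    seen_tokens = set()
    for _ in range(max_pages):
        reviews, next_token = fetch_page(token)
        yield from reviews
        if not next_token or next_token in seen_tokens:
            break  # end of data, or a repeated token that would loop forever
        seen_tokens.add(next_token)
        token = next_token


# Stand-in for a real endpoint: three pages chained by tokens.
FAKE_PAGES = {
    None: ([{"id": "r1"}, {"id": "r2"}], "t1"),
    "t1": ([{"id": "r3"}], "t2"),
    "t2": ([{"id": "r4"}], None),
}


def fake_fetch(token: Optional[str]) -> Tuple[list, Optional[str]]:
    return FAKE_PAGES[token]
```

Calling `list(iter_reviews(fake_fetch))` walks all three pages in order. The `max_pages` cap and the repeated-token check are defensive additions so a misbehaving endpoint cannot trap the loop.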

Scaling Strategy

When it comes to scraping reviews at scale, how you distribute your requests matters just as much as how many you send. A common mistake is trying to speed things up by increasing concurrency on a single target.

The Wrong Approach

1 app x 50 threads

Running multiple threads on a single app or product might seem efficient, but it often leads to rate limits, blocked requests, or incomplete data. Since many platforms rely on sequential pagination, aggressive parallel requests can break the flow or trigger anti-bot mechanisms.

The Right Approach

50 apps x 1 thread

A more effective strategy is to distribute your workload across multiple apps or products, with a single sequential worker per target. This reduces the risk of detection, maintains data consistency, and allows you to scale horizontally instead of overwhelming a single source.

By spreading requests intelligently, you can collect large volumes of data while keeping your scraping process stable and efficient.
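A minimal sketch of the "50 apps x 1 thread" pattern, using Python's standard thread pool. The `scrape_app` callable is an assumption: it stands for whatever sequential, single-app routine you already have.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def scrape_many(app_ids: List[str],
                scrape_app: Callable[[str], list],
                max_workers: int = 50) -> Dict[str, list]:
    """Run one sequential scrape per app, with apps processed in parallel."""
    unique_ids = list(dict.fromkeys(app_ids))  # never run the same app twice
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Each task owns exactly one app, so its pagination chain stays in order.
        results = pool.map(scrape_app, unique_ids)
        return dict(zip(unique_ids, results))
```

Parallelism lives only at the app level. Inside `scrape_app`, requests stay strictly sequential, which is what keeps token-based pagination intact.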

Strategies for Real-Time Review Monitoring

Keeping review data current without re-scraping everything comes down to a few key strategies:

Incremental Scraping

Instead of collecting all reviews repeatedly, incremental scraping focuses only on new data.

- Sort reviews by “Newest” to prioritize recent entries

- Stop the process once duplicate or previously collected reviews are detected

This approach reduces unnecessary requests and keeps your data pipeline efficient.
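The stop condition might look like the sketch below. It assumes reviews arrive newest first and that each carries an `id` key (a hypothetical field name). Stopping after a short run of known reviews, rather than the first one, is a design choice made here because sort orders are not always strictly chronological.

```python
from typing import Iterable, List, Set


def collect_new(reviews_newest_first: Iterable[dict],
                known_ids: Set[str],
                stop_after_known: int = 3) -> List[dict]:
    """Collect unseen reviews and stop after a run of already-known ones."""
    fresh = []
    consecutive_known = 0
    for review in reviews_newest_first:
        if review["id"] in known_ids:
            consecutive_known += 1
            if consecutive_known >= stop_after_known:
                break  # we have reached previously scraped territory
            continue
        consecutive_known = 0
        fresh.append(review)
    return fresh
```

Because the input is any iterable, this can wrap a lazy paginator: breaking out of the loop also stops further page requests.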

Smart Refresh Cycles

Not all scraping needs to happen at the same frequency. A balanced approach works best:

- Frequent light scrapes to capture newly added reviews

- Periodic deep scrapes to ensure completeness and catch missed data

This helps maintain both speed and accuracy without overloading your system.
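A simple scheduler can decide which kind of scrape is due for each app. The hourly and weekly intervals below are illustrative assumptions, not recommendations from any platform; tune them to how quickly reviews arrive for your targets.

```python
from datetime import datetime, timedelta
from typing import Optional

LIGHT_EVERY = timedelta(hours=1)  # illustrative interval
DEEP_EVERY = timedelta(days=7)    # illustrative interval


def next_scrape_mode(now: datetime,
                     last_light: Optional[datetime],
                     last_deep: Optional[datetime]) -> Optional[str]:
    """Return "deep", "light", or None if nothing is due yet."""
    if last_deep is None or now - last_deep >= DEEP_EVERY:
        return "deep"   # a deep scrape also covers what a light one would
    if last_light is None or now - last_light >= LIGHT_EVERY:
        return "light"
    return None
```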

Deduplication Pipelines

As data volume grows, avoiding duplicates becomes critical.

- Track unique identifiers such as review IDs, or a combination of author and timestamp when no ID is available

- Filter out already ingested data before storing new entries

A strong deduplication system ensures your dataset stays clean, reliable, and ready for analysis.
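A deduplication step can be sketched as a filter keyed on the review ID, with a content hash as a fallback. The field names (`id`, `author`, `timestamp`, `text`) are assumptions for the example, and the in-memory `seen` set stands in for whatever persistent store a production pipeline would use.

```python
import hashlib
from typing import Iterable, Iterator, Set


def review_key(review: dict) -> str:
    """Prefer the platform's review ID; otherwise hash stable fields."""
    if review.get("id"):
        return f"id:{review['id']}"
    basis = "|".join(str(review.get(k, "")) for k in ("author", "timestamp", "text"))
    return "hash:" + hashlib.sha256(basis.encode("utf-8")).hexdigest()


def dedupe(reviews: Iterable[dict], seen: Set[str]) -> Iterator[dict]:
    """Yield only reviews whose key is not already in `seen`, updating it."""
    for review in reviews:
        key = review_key(review)
        if key in seen:
            continue
        seen.add(key)
        yield review
```

Passing the same `seen` set across batches filters duplicates both within a batch and against earlier runs.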

Technical Considerations by Platforms

| Platform | Data Access Methods | Key Characteristics |
| --- | --- | --- |
| Apple App Store | RSS feeds (~500 reviews), internal APIs, dynamic HTML | Easier entry points with RSS feeds for recent reviews, but limited volume. Deeper data requires working with internal APIs or parsing dynamic content. |
| Google Play | POST requests, Protobuf responses | Uses structured but less transparent data formats. Responses are often encoded (Protobuf), making them harder to decode and reverse-engineer compared to traditional JSON APIs. |
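For the RSS route, a sketch of building feed URLs and flattening a JSON response is shown below. Treat the details as assumptions to verify against a live response: the URL pattern, the 10-page default, and the field names (`im:rating`, `im:version`, `content`, `updated`) reflect the commonly used public customer-reviews feed, which is not formally documented here and may change.

```python
import json
from typing import List

# Assumed URL pattern for the public customer-reviews feed; verify before use.
FEED_URL = ("https://itunes.apple.com/{country}/rss/customerreviews/"
            "page={page}/id={app_id}/sortby=mostrecent/json")


def feed_urls(app_id: str, country: str, pages: int = 10) -> List[str]:
    """Build one URL per feed page for a given storefront country."""
    return [FEED_URL.format(country=country.lower(), page=p, app_id=app_id)
            for p in range(1, pages + 1)]


def parse_feed(payload: str) -> List[dict]:
    """Flatten feed entries into plain dicts (field names are assumed)."""
    entries = json.loads(payload).get("feed", {}).get("entry", [])
    if isinstance(entries, dict):  # a single entry may arrive unwrapped
        entries = [entries]
    rows = []
    for e in entries:
        if "im:rating" not in e:
            continue               # skip entries that are not reviews
        rows.append({
            "id": e["id"]["label"],
            "rating": int(e["im:rating"]["label"]),
            "text": e.get("content", {}).get("label", ""),
            "version": e.get("im:version", {}).get("label"),
            "updated": e.get("updated", {}).get("label"),
        })
    return rows
```

The country code in the URL is the storefront selector, so the same app ID needs one pass per region to cover geo-segmented reviews.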

Core Infrastructure for Scaling Review Scraping

Here are some core components to consider:

Geo-Targeted Proxies

Accurate review data depends on matching your request origin with the storefront region. Using geo-targeted proxies ensures that platforms return the correct, location-specific reviews instead of generic or restricted datasets.

Anti-Detection Systems

Platforms actively monitor for non-human behavior, especially at scale. To maintain consistent access, scraping systems need built-in safeguards such as:

- User-agent rotation to mimic different devices and browsers

- Fingerprinting management to avoid identifiable patterns

- Rate limiting to prevent triggering platform restrictions
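Two of these safeguards are simple enough to sketch with the standard library: a minimum-interval rate limiter and a rotating pool of user-agent strings. The class and function names are hypothetical, and fingerprint management is out of scope for this example. The injectable `clock` and `sleep` parameters exist only to make the limiter testable without real delays.

```python
import itertools
import time
from typing import Callable, Iterator, List, Optional


class MinIntervalLimiter:
    """Enforce a minimum gap between requests to one target."""

    def __init__(self, min_interval: float,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last: Optional[float] = None

    def wait(self) -> float:
        """Block until the next request is allowed; return seconds waited."""
        now = self._clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self.min_interval - (now - self._last))
        if delay:
            self._sleep(delay)
        self._last = now + delay
        return delay


def user_agent_cycle(agents: List[str]) -> Iterator[str]:
    """Rotate through a fixed pool of User-Agent strings."""
    return itertools.cycle(agents)
```

In practice you would keep one limiter per target (app or domain) and call `wait()` immediately before each request.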

Distributed Scraping Architecture

Scaling isn’t just about sending more requests; it’s about structuring them efficiently.

- Parallel jobs allow multiple scraping tasks to run simultaneously

- Queue-based pipelines help manage and distribute workloads

- Multi-region execution ensures better coverage and resilience
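A queue-based pipeline can be sketched in-process with the standard library before moving to a real message broker. Here each job is an `(app_id, country)` pair, and `handle` is an assumed callable that scrapes one app in one region; both are illustrative choices.

```python
import queue
import threading
from typing import Callable, List, Tuple

Job = Tuple[str, str]  # (app_id, country)


def run_queue(jobs: List[Job],
              handle: Callable[[str, str], int],
              workers: int = 4) -> dict:
    """Drain a job queue with a small worker pool and collect per-job results."""
    todo: "queue.Queue[Job]" = queue.Queue()
    for job in jobs:
        todo.put(job)
    results: dict = {}
    lock = threading.Lock()

    def worker() -> None:
        while True:
            try:
                app_id, country = todo.get_nowait()
            except queue.Empty:
                return  # queue drained, worker exits
            try:
                outcome = handle(app_id, country)
            except Exception as exc:  # keep one bad job from killing the worker
                outcome = exc
            with lock:
                results[(app_id, country)] = outcome

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

Failed jobs are recorded as exception objects rather than raised, so a caller can inspect the results and re-queue only what failed.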

Robust Parsing Systems

Even after collecting data, extracting it reliably is another challenge.

- Fallback selectors help handle minor changes in page structure

- HTML change handling ensures your scraper adapts to platform updates
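Fallback selectors amount to trying an ordered list of patterns and taking the first that matches. The sketch below uses the standard library's ElementTree and its limited XPath support so that it runs without extra dependencies; the selectors and class names are hypothetical, and real pages usually need a forgiving HTML parser such as BeautifulSoup because ElementTree only accepts well-formed markup.

```python
import xml.etree.ElementTree as ET
from typing import List, Optional

# Ordered from the current layout to older or alternative ones (all hypothetical).
TEXT_SELECTORS = [
    ".//p[@class='review-body']",
    ".//div[@class='review-text']",
    ".//span[@itemprop='reviewBody']",
]


def first_match(node: ET.Element, selectors: List[str]) -> Optional[str]:
    """Return the text of the first selector that matches, else None."""
    for selector in selectors:
        found = node.find(selector)
        if found is not None and (found.text or "").strip():
            return found.text.strip()
    return None


def parse_review_text(markup: str) -> Optional[str]:
    """Extract review text from a well-formed snippet using fallbacks."""
    return first_match(ET.fromstring(markup), TEXT_SELECTORS)
```

Returning `None` when every selector fails, instead of raising, lets the pipeline count misses and flag a likely layout change once the miss rate climbs.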

Note: At scale, infrastructure layers like Decodo combine proxy networks, anti-bot bypassing, and request routing. This reduces the complexity of managing scraping reliability manually.

Top Tools for Scraping App Store & Marketplace Reviews

Here’s a quick overview:

| Category | Tools |
| --- | --- |
| Frameworks | Scrapy, Playwright |
| APIs | SerpAPI |
| No-code | Outscraper |
| Infra + API hybrid | Decodo |

1. Decodo (Scraping API + Proxy Infrastructure)

Decodo takes a more integrated approach by combining both scraping capabilities and infrastructure into a single solution. Instead of managing multiple layers separately, it brings everything together to simplify large-scale data collection.

- Combines proxies, anti-bot handling, and JavaScript handling

- Reduces infrastructure overhead and operational complexity

- Designed specifically for scalability and reliability

Unlike traditional tools, Decodo abstracts both scraping logic and infrastructure into a single layer. This makes it easier for teams to focus on data extraction and analysis rather than managing backend systems.

2. Scrapy

Scrapy is one of the most popular frameworks for large-scale web scraping. It’s designed for building fast, scalable crawlers and gives developers complete control over how data is collected and processed.

- Scalable crawling framework built for performance

- Offers full control over scraping logic and workflows

- Requires engineering effort to set up and maintain

It’s a strong choice for teams that want flexibility and are comfortable handling infrastructure customization on their own.

3. Playwright/Puppeteer

Playwright and Puppeteer are browser automation tools that allow you to interact with websites just like a real user. They are especially useful for platforms where content is heavily dependent on JavaScript.

- Browser automation that simulates real user behavior

- Effectively handles JavaScript-heavy platforms and dynamic content

- Higher resource usage compared to lightweight scraping frameworks

These tools are ideal when traditional HTTP-based scraping doesn’t work, but they do require more computational power and careful scaling strategies.

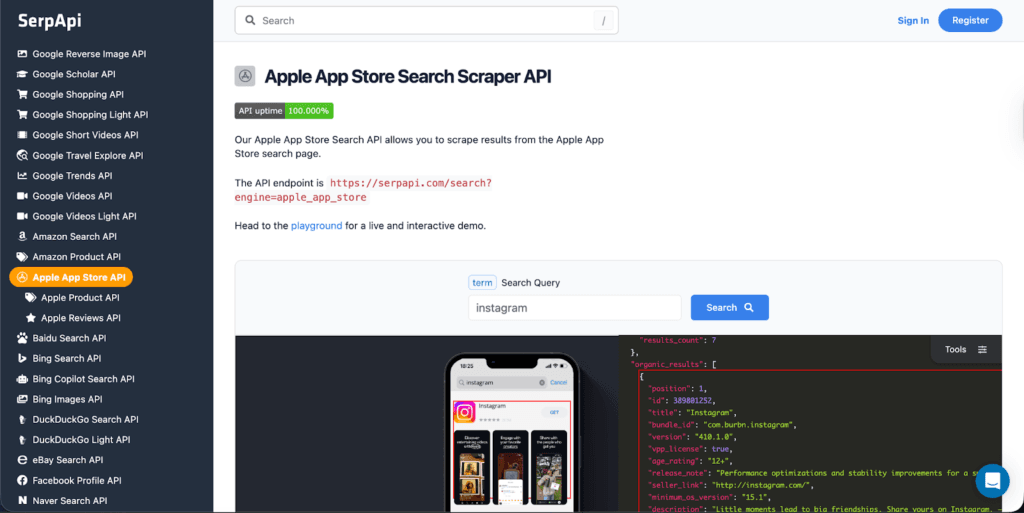

4. SerpAPI

SerpAPI is an API-based solution that handles the complexity of scraping for you. Instead of building and maintaining your own scrapers, you can fetch structured review data directly through their API.

- API-based scraping with minimal setup required

- Returns structured outputs, making it easy to integrate into workflows

- Reduces the need for handling infrastructure, parsing, and anti-bot systems

It’s a good option for teams that want quick access to data without investing heavily in engineering resources.

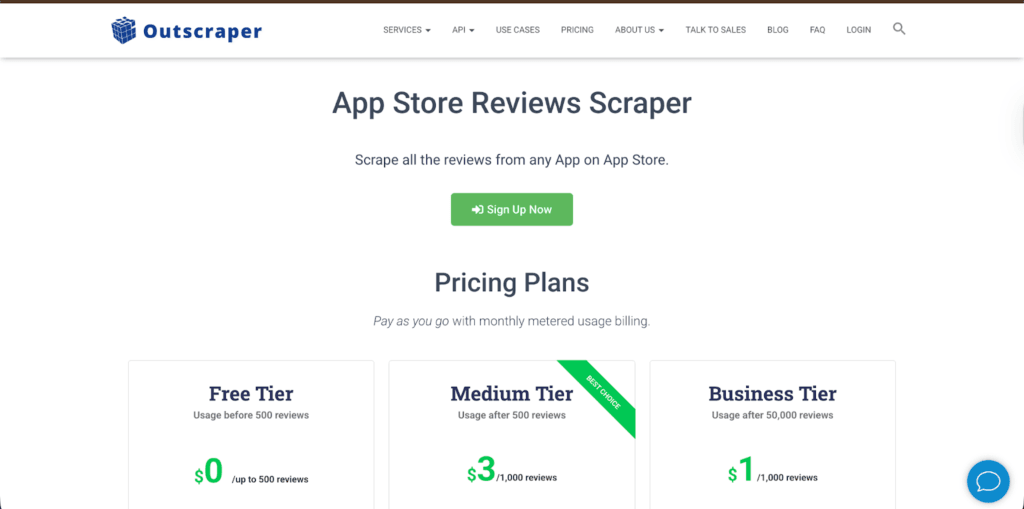

5. Outscraper

Outscraper is a no-code scraping solution designed for users who want to extract data without writing scripts or managing infrastructure. It simplifies the entire process by handling scraping in the cloud.

- No-code solution with a simple, intuitive interface

- Quick setup for users to start extracting data in minutes

- Suitable for non-technical users and small teams

Outscraper allows users to extract review data based on filters like rating, date, language, and app version, making it easier to gather relevant insights without a complex setup.

Because it runs on cloud infrastructure, users don’t need to worry about proxies, scaling, or system maintenance, making it a convenient entry point into review scraping.

6. BeautifulSoup

BeautifulSoup is a Python library used for parsing HTML and extracting data from web pages. While it’s widely used in scraping workflows, it’s important to note that it’s not a complete scraping solution on its own.

- Lightweight parsing library for HTML and XML

- Helps extract specific elements like review text, ratings, or timestamps

- Not a full scraping solution; requires additional tools for requests, scaling, and automation

BeautifulSoup is often used alongside frameworks or custom scripts to handle the data extraction layer after the content has been fetched.

Common Mistakes to Avoid

Avoiding these common pitfalls can save time, resources, and unnecessary rework.

| Mistake | Why It’s a Problem |
| --- | --- |
| Ignoring Geo Differences | Leads to incomplete or misleading datasets, as reviews vary by country and region |
| Scraping with the Wrong IP Region | A mismatch between request parameters and IP location can result in incorrect or restricted data |
| Overloading a Single Endpoint | Triggers rate limits or blocks, disrupting data collection and reducing efficiency |
| No Incremental Strategy | Causes repeated scraping of the same data, wasting resources and slowing down pipelines |
| No Deduplication | Results in duplicate entries, cluttering datasets and affecting analysis accuracy |

As platforms tighten geo-restrictions and strengthen anti-bot systems, infrastructure-first solutions like Decodo will play a critical role in enabling reliable, large-scale review data collection.

Reviews aren’t just feedback; they’re a real-time product roadmap. To unlock their full value at scale, three things become critical:

- Geo Accuracy Matters: Without region-specific data, insights can be incomplete or misleading

- Infrastructure Matters: Reliable systems ensure consistent and scalable data collection

- Strategy Matters: Smart scraping approaches make the difference between noise and actionable insights

When these elements come together, review data transforms from scattered opinions into structured, decision-driving intelligence.

Explore our other blogs on web scraping to learn how to collect, scale, and turn data into actionable insights:

- How to Scrape Websites Without Getting Blocked

- Avoid IP Blocking in Web Scraping with IP Rotation

- Web Scraping at Scale with Smart, Multi-Region Infrastructure

FAQs

Start with a scraper that collects reviews using pagination, add a proxy layer for geo-targeting, store the data in a database, and connect it to a processing layer for cleaning and analysis. At scale, this typically evolves into a queue-based, distributed system.

A typical setup includes input sources (app IDs), a scraping layer (requests + parsing), a proxy/infra layer, a queue or scheduler, storage (database or warehouse), and a processing layer for analytics and insights.

Stick to one IP when handling sequential pagination for a single app to maintain consistency. Rotate IPs when switching between apps, regions, or when you start hitting rate limits or blocks.

Instead of parallelizing requests for the same app, distribute your workload across multiple apps or products. Run one thread per app and scale horizontally to avoid breaking pagination flow or triggering detection.

Once you move beyond small-scale scripts and start dealing with geo-restrictions, anti-bot systems, and high request volumes, dedicated infrastructure becomes essential for maintaining reliability and scalability.

Disclosure – This post contains some sponsored links and some affiliate links, and we may earn a commission when you click on the links at no additional cost to you.