Job postings are one of the clearest signals of where the market is moving in real time. Every new listing tells a story about what companies need, which roles are in demand, and how industries are evolving.

By analyzing job data, you can uncover valuable insights such as hiring demands, salary shifts, and skill trends. This kind of data acts as real-time market intelligence that can guide smarter decisions. But collecting that data at scale? That’s where things fall apart.

What is Job Board Scraping?

Job board scraping is the process of automatically extracting job listing data from online sources at scale. These sources typically include:

- Job boards (like aggregators and listing platforms)

- Company career pages

- Niche or industry-specific platforms

The data collected usually includes key details such as:

- Job title

- Company name

- Salary

- Required skills

- Location

Why Businesses Scrape Job Boards

Job board scraping is about turning raw data into actionable insights that businesses can use to stay ahead.

Talent & Hiring Intelligence

By tracking job postings across industries, companies can understand where hiring is increasing, which roles are in demand, and how competitors are scaling their teams.

Salary Benchmarking

Access to large volumes of job data helps businesses compare salary ranges across roles, locations, and experience levels, ensuring they stay competitive in attracting talent.

Skill Demand Analysis

Job listings reveal the exact skills companies are looking for. This allows organizations to identify emerging trends and align their hiring or training strategies accordingly.
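
As a rough illustration, skill demand can be estimated by counting how many listings mention each skill. The sketch below assumes a tiny hand-written skill list (`SKILLS`) and a few made-up descriptions; a real vocabulary would be much larger and would need synonym handling.

```python
import re
from collections import Counter

# Hypothetical skill vocabulary, for illustration only.
SKILLS = ["python", "sql", "aws", "kubernetes", "react"]

def count_skills(descriptions, skills=SKILLS):
    """Count how many listings mention each skill at least once."""
    counts = Counter()
    for text in descriptions:
        lowered = text.lower()
        for skill in skills:
            # Word boundaries keep "sql" from matching inside "mysql".
            if re.search(rf"\b{re.escape(skill)}\b", lowered):
                counts[skill] += 1
    return counts

# Made-up sample listings.
listings = [
    "Backend engineer with Python and SQL experience",
    "Data analyst: SQL, Python, and dashboards",
    "Frontend developer (React), MySQL a plus",
]
print(count_skills(listings).most_common())
```

Counting each listing once per skill, rather than counting every mention, keeps a single keyword-stuffed posting from skewing the trend.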

Competitive Hiring Tracking

Monitoring competitor job postings provides insight into their growth plans, new market entries, or shifts in business strategy.

Why Job Board Scraping Breaks at Scale

Here’s where most systems run into trouble:

- IP Bans Mid-Run: Repeated requests from the same IP get flagged, cutting off access unexpectedly

- CAPTCHAs and Bot Protection: Many job sites actively block automated traffic, interrupting your pipeline

- Missing or Inconsistent Data: Not all listings follow the same format, leading to gaps in your dataset

- Frequent Layout Changes: Even small updates to a site’s structure can break your scraping logic overnight

- Incomplete Datasets: Failed requests, timeouts, or blocks result in partial and unreliable data

How Job Boards Detect and Block Scrapers

Here are the most common detection methods:

- IP Reputation: Requests coming from flagged or overused IPs are more likely to get blocked

- Request Velocity: Sending too many requests too quickly is a clear signal of automation

- Behavior Patterns: Repetitive actions without natural pauses or variation raise red flags

- Geo Mismatch: Accessing region-specific job listings from the wrong location can trigger suspicion

- Fingerprinting: Advanced systems track browser and device signatures to identify non-human traffic

Core Best Practices for Large-Scale Job Scraping

Here are the core best practices to keep your pipeline reliable:

Use Geo-Targeted Proxies

Job listings are often location-sensitive, meaning the same search can return completely different results depending on your IP.

If you’re scraping from the wrong region, you’re not just risking blocks; you’re collecting inaccurate data.

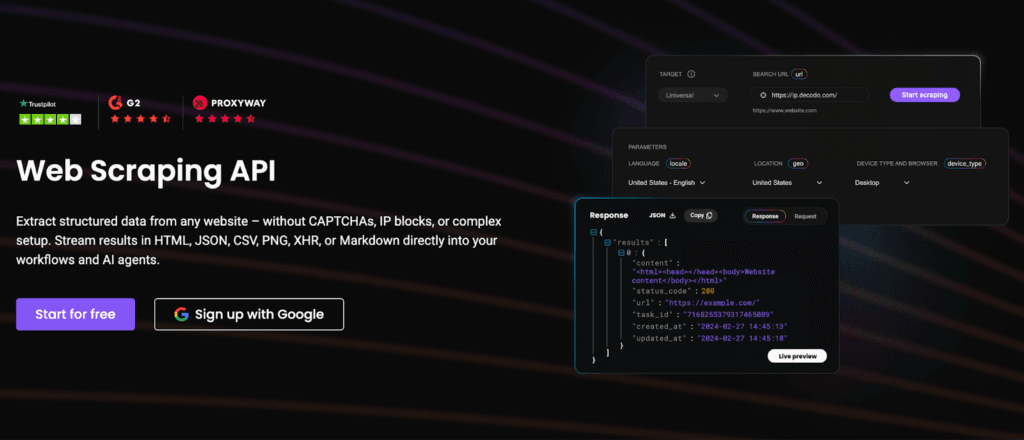

Using providers like Decodo enables geo-targeted scraping, ensuring accurate regional job listings while reducing block rates.

Use Real Browser Automation

Modern job boards are heavily dependent on JavaScript, which means simple HTTP requests often won’t capture the full data.

Tools like Playwright or Puppeteer drive a real browser, so pages are fully rendered before you extract data from them.

This is especially important for:

- JS-heavy websites

- Infinite scroll job listings

- Dynamically loaded content
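
Here is a minimal sketch of that idea using Playwright's synchronous Python API. The `url` and `card_selector` arguments are placeholders you would supply for a specific site, and the scroll distance and wait time are arbitrary assumptions, not tuned values.

```python
def dedupe_keep_order(items):
    """Drop repeated strings while keeping first-seen order."""
    return list(dict.fromkeys(items))

def scrape_titles(url, card_selector, max_scrolls=5):
    """Render a JS-heavy listing page and collect the text of each job card.

    url and card_selector are placeholders supplied by the caller.
    """
    # Imported here so the helper above works without Playwright installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        page = browser.new_page()
        page.goto(url, wait_until="domcontentloaded")
        page.wait_for_selector(card_selector)
        titles = []
        for _ in range(max_scrolls):
            titles.extend(page.locator(card_selector).all_inner_texts())
            page.mouse.wheel(0, 2000)    # scroll down to trigger lazy loading
            page.wait_for_timeout(1000)  # give new cards time to render
        # Pick up anything loaded by the final scroll.
        titles.extend(page.locator(card_selector).all_inner_texts())
        browser.close()
    return dedupe_keep_order(t.strip() for t in titles)
```

Collecting on every scroll, then deduplicating, is a defensive choice: some infinite-scroll pages remove older cards from the DOM as new ones load.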

Mimic Human Behavior

Scraping too fast or too predictably is one of the easiest ways to get blocked. To reduce detection:

- Add delays between requests

- Simulate natural scrolling patterns

- Use random intervals instead of fixed timing

At scale, velocity is one of the biggest ban triggers, so slowing down strategically actually improves long-term reliability.
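
A simple way to apply random intervals is to add jitter to a base delay. In this sketch, `fetch` is a caller-supplied function (an assumption, not a specific library call), and the default timings are illustrative only.

```python
import random
import time

def jittered_delay(base=2.0, jitter=1.5, rng=random):
    """Return a pause in seconds drawn uniformly from [base, base + jitter]."""
    return base + rng.uniform(0, jitter)

def polite_fetch_all(urls, fetch, sleep=time.sleep):
    """Call fetch(url) for each URL, pausing a random interval between calls."""
    results = []
    for i, url in enumerate(urls):
        if i > 0:
            # No pause before the first request, a random one before the rest.
            sleep(jittered_delay())
        results.append(fetch(url))
    return results
```

Passing `sleep` as a parameter makes the pacing easy to test or to swap for an async equivalent later.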

Rotate IPs & Sessions

As your scraping volume grows, relying on a single IP or session becomes unsustainable. Rotating IPs and sessions helps distribute traffic and avoid detection patterns.

Proxy providers such as Decodo can automate this rotation at scale while keeping individual sessions stable.
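
If you manage rotation yourself, the core logic can be sketched as a round-robin pool that skips proxies that have failed. The class and function names below are hypothetical, and `fetch(url, proxy)` stands in for whatever HTTP client you use; treating `ConnectionError` as the block signal is also an assumption.

```python
import itertools

class ProxyRotator:
    """Cycle through a proxy pool, skipping proxies marked as banned."""

    def __init__(self, proxies):
        self._proxies = list(proxies)
        self._banned = set()
        self._cycle = itertools.cycle(self._proxies)

    def mark_banned(self, proxy):
        self._banned.add(proxy)

    def next_proxy(self):
        # One full pass over the pool is enough to find a healthy proxy.
        for _ in range(len(self._proxies)):
            proxy = next(self._cycle)
            if proxy not in self._banned:
                return proxy
        raise RuntimeError("all proxies are banned")

def fetch_with_rotation(url, rotator, fetch, max_attempts=3):
    """Try fetch(url, proxy), switching to a new proxy after each failure."""
    last_error = None
    for _ in range(max_attempts):
        proxy = rotator.next_proxy()
        try:
            return fetch(url, proxy)
        except ConnectionError as exc:
            rotator.mark_banned(proxy)
            last_error = exc
    raise RuntimeError(f"gave up on {url}") from last_error
```

In production you would likely let banned proxies recover after a cooldown instead of excluding them permanently.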

Handle Dynamic Content Properly

Not all job data is visible in the initial page load. Many platforms rely on dynamic loading techniques.

To capture complete data, you need to account for:

- JavaScript-rendered elements

- Hidden API endpoints

- Pagination and lazy loading

Ignoring these can lead to incomplete or misleading datasets.
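
Pagination is a common source of silent data loss. The sketch below walks a paginated endpoint until it runs out of results. `fetch_page` is a caller-supplied function, and the `{"jobs": [...], "has_more": bool}` response shape is an assumption; real endpoints vary.

```python
def iter_all_jobs(fetch_page, max_pages=100):
    """Yield every job from a paginated endpoint.

    fetch_page(page_number) is supplied by the caller and is assumed to
    return a dict shaped like {"jobs": [...], "has_more": bool}.
    """
    for page_number in range(1, max_pages + 1):
        payload = fetch_page(page_number)
        jobs = payload.get("jobs", [])
        yield from jobs
        # Stop on an empty page or when the endpoint reports no further pages.
        if not jobs or not payload.get("has_more", False):
            break
```

The `max_pages` cap is a safety net so a misbehaving endpoint cannot keep the loop running indefinitely.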

Extract Only Public Data (Compliance)

Scraping comes with legal and ethical responsibilities. Best practices include:

- Extract only publicly available data

- Avoid collecting personal or sensitive information

- Respect the website’s terms of service

Data Pipeline Best Practices

To get real value from job data, you need a pipeline that keeps your data clean, fresh, and reliable over time.

Data Cleaning & Deduplication

Job listings often appear multiple times across platforms or even within the same source. To maintain quality:

- Remove duplicate listings

- Normalize fields like job titles, locations, and salary formats
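
As a minimal sketch, both steps can start with plain string normalization. The field names (`title`, `company`, `location`) and the salary format handled here are assumptions; real listings need more cases, such as currencies and hourly rates.

```python
import re

def normalize_text(value):
    """Lowercase, trim, and collapse runs of whitespace."""
    return re.sub(r"\s+", " ", value).strip().lower()

def parse_salary(raw):
    """Turn strings like "$80k - $100,000" into (min, max) integers, or None."""
    amounts = []
    for number, suffix in re.findall(r"(\d[\d,]*(?:\.\d+)?)([kK]\b)?", raw):
        value = float(number.replace(",", ""))
        # A trailing "k" means thousands.
        amounts.append(int(round(value * 1000)) if suffix else int(value))
    return (min(amounts), max(amounts)) if amounts else None

def dedupe_jobs(jobs):
    """Keep the first listing seen for each (title, company, location) key."""
    seen = set()
    unique = []
    for job in jobs:
        key = tuple(normalize_text(job[f]) for f in ("title", "company", "location"))
        if key not in seen:
            seen.add(key)
            unique.append(job)
    return unique
```

Normalizing before comparing is what lets "Data  Engineer" at "ACME" match "data engineer" at "Acme".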

Freshness & Update Cycles

Job data has a short lifespan. Listings go live, get filled, and disappear quickly.

To stay relevant:

- Refresh data frequently

- Re-scrape high-priority sources at regular intervals

Monitoring & Block Detection

At scale, you can’t rely on manual checks. You need systems that alert you when something breaks. Key metrics to track:

- Missing or incomplete fields

- CAPTCHA frequency

- Sudden drops or spikes in job count
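
These metrics can be turned into automated checks that run after every scrape. The thresholds and required fields below are illustrative assumptions that you would tune per source.

```python
REQUIRED_FIELDS = ("title", "company", "location")  # assumed schema

def run_health(jobs, captcha_hits, requests_made, previous_count,
               max_missing=0.10, max_captcha=0.05, max_swing=0.50):
    """Return a list of alert strings for one scraping run."""
    alerts = []
    # A listing counts as incomplete if any required field is absent or empty.
    missing = sum(1 for job in jobs if any(not job.get(f) for f in REQUIRED_FIELDS))
    if jobs and missing / len(jobs) > max_missing:
        alerts.append(f"missing fields in {missing}/{len(jobs)} listings")
    if requests_made and captcha_hits / requests_made > max_captcha:
        alerts.append(f"CAPTCHA rate {captcha_hits}/{requests_made}")
    # Compare this run's job count against the previous run.
    if previous_count and abs(len(jobs) - previous_count) / previous_count > max_swing:
        alerts.append(f"job count moved from {previous_count} to {len(jobs)}")
    return alerts
```

An empty list means the run looks healthy; anything else can be forwarded to whatever alerting channel you already use.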

Scaling Job Scraping Infrastructure

As your data needs grow, the challenge shifts from writing scrapers to building systems that can handle complexity efficiently.

Distributed Scraping

Instead of relying on a single machine or process, large-scale scraping is distributed across multiple nodes. This helps balance the load, improve speed, and reduce the risk of failure.

Parallel Pipelines

Running multiple scraping tasks in parallel allows you to collect data faster while covering more sources at the same time. It also ensures that delays or failures in one pipeline don’t affect the entire system.
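
One way to get that isolation on a single machine is a thread pool that records failures per source instead of stopping. In this sketch, `scrape_one` is a caller-supplied function and the worker count is an arbitrary assumption.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed

def scrape_sources(sources, scrape_one, max_workers=4):
    """Run scrape_one(source) for each source in parallel threads.

    Returns (results, errors) so one failing source does not stop the rest.
    """
    results, errors = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(scrape_one, s): s for s in sources}
        for future in as_completed(futures):
            source = futures[future]
            try:
                results[source] = future.result()
            except Exception as exc:  # isolate the failure to this source
                errors[source] = exc
    return results, errors
```

Threads suit this workload because scraping is mostly waiting on the network. The same pattern extends to multiple machines with a task queue.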

Smart Scheduling

Not all sources need to be scraped at the same frequency. Scheduling allows you to:

- Prioritize high-value or frequently updated job boards

- Reduce unnecessary requests

- Optimize resource usage

A well-planned schedule keeps your data fresh without overloading your infrastructure.
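
A minimal sketch of per-source scheduling gives each source its own refresh interval and scrapes only what is due. The source names and intervals here are hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical sources and refresh intervals.
INTERVALS = {
    "big-job-board": timedelta(hours=1),
    "niche-board": timedelta(hours=12),
    "company-careers": timedelta(days=1),
}

def due_sources(last_scraped, now, intervals=INTERVALS):
    """Return sources whose interval has elapsed, most overdue first."""
    overdue = []
    for source, interval in intervals.items():
        last = last_scraped.get(source)
        # A source that has never been scraped is always due.
        lateness = timedelta.max if last is None else now - last - interval
        if lateness >= timedelta(0):
            overdue.append((lateness, source))
    return [source for lateness, source in sorted(overdue, reverse=True)]
```

Calling `due_sources` from a periodic job (cron, for example) avoids re-scraping slow-moving sources on every run.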

Scraping vs APIs: When to Use What

The answer depends on your goals. Both approaches have their strengths, and in practice, most systems rely on a combination of the two.

| Approach | Advantages | Limitations |
| --- | --- | --- |
| APIs | Cleaner, structured data; more reliable and stable; typically compliant with platform rules | Limited access to data; rate limits and restrictions; not all platforms provide APIs |
| Scraping | Wider data coverage; access to platforms without APIs; more flexibility in data extraction | Prone to blocks and CAPTCHAs; requires maintenance; handling unstructured data can be complex |

APIs are ideal when you need clean, compliant, and stable data pipelines. Scraping, on the other hand, is better suited for expanding coverage and accessing otherwise unavailable data.

Most large-scale systems use a hybrid approach, combining APIs for reliability and scraping for flexibility.

Common Mistakes to Avoid

Here are some common pitfalls:

- Scraping Too Fast: High request velocity is one of the quickest ways to get blocked

- Ignoring Geo-Targeting: Using the wrong location leads to inaccurate or incomplete job data

- No Proxy Rotation: Relying on a single IP increases the risk of bans and interruptions

- Lack of Monitoring: Without tracking failures, issues go unnoticed until data quality drops

- Collecting Unnecessary Data: Extracting more than needed increases load, complexity, and risk

Avoiding these mistakes can significantly improve the stability and reliability of your pipeline.

The Future of Job Data Collection

Here’s what the future looks like:

AI-Powered Parsing of Job Descriptions

Extracting deeper insights from unstructured text, such as role expectations, seniority levels, and hidden skill requirements.

Real-Time Labor Market Analytics

Moving from static reports to live dashboards that reflect hiring trends as they happen.

Automated Insights

Systems that don’t just collect data, but analyze and surface actionable patterns automatically.

As job platforms become smarter, infrastructure providers like Decodo will play a key role in enabling reliable, large-scale data access without disruption.

At scale, success doesn’t come from scraping faster. It comes from building systems that are reliable, consistent, and compliant.

To get it right, focus on:

- Reliability: Ensure your pipelines run smoothly without frequent breaks

- Scalability: Design systems that can handle growing data needs efficiently

- Compliance: Respect legal and ethical boundaries while collecting data

When these foundations are in place, job data becomes a powerful asset for real-time market intelligence.

Explore our other blogs on web scraping to discover more strategies, tools, and best practices for scaling data effectively:

- How to Scrape Websites Without Getting Blocked

- Avoid IP Blocking in Web Scraping with IP Rotation

- Web Scraping at Scale with Smart, Multi-Region Infrastructure

FAQs

A typical setup includes a request layer (with proxies and rotation), a scraper (like Playwright or Scrapy), a parsing layer to structure the data, a storage system (database or data warehouse), and a monitoring layer to track failures and performance.

Proxy rotation involves cycling through a pool of IPs for each request or session, adding retries, and managing session persistence. At scale, this is usually handled through proxy providers or middleware that automatically rotate IPs and handle failures.

Store raw data in a database or data lake, then process and clean it using pipelines. Structured storage (like SQL or warehouses) helps with querying, while batch or streaming workflows ensure data stays updated and usable.
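
For a small-scale illustration, the standard-library `sqlite3` module is enough to show the pattern: keep the raw record alongside structured columns, and refresh rows when a listing is seen again. The table layout and the `job_id` field are assumptions for this sketch.

```python
import json
import sqlite3

def open_store(path=":memory:"):
    """Open (or create) a SQLite store with a jobs table."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS jobs (
               job_id TEXT PRIMARY KEY,
               title TEXT, company TEXT, location TEXT,
               raw_json TEXT,
               last_seen TEXT
           )"""
    )
    return conn

def upsert_job(conn, job, seen_at):
    """Insert a listing, or overwrite the row that has the same job_id."""
    conn.execute(
        """INSERT OR REPLACE INTO jobs
           (job_id, title, company, location, raw_json, last_seen)
           VALUES (?, ?, ?, ?, ?, ?)""",
        (job["job_id"], job["title"], job["company"], job["location"],
         json.dumps(job), seen_at),
    )
    conn.commit()
```

Storing `raw_json` next to the parsed columns means you can re-parse old records later without scraping them again.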

Scrapy is ideal for fast, large-scale scraping of static sites, while Playwright is better for dynamic, JavaScript-heavy pages. Many systems use a hybrid approach, combining tools based on the complexity of the target websites.

Use APIs when available for cleaner and more reliable data. Switch to scraping when APIs are limited or unavailable. Most large-scale systems combine both: APIs for stability and scraping for broader coverage.

Disclosure – This post contains some sponsored links and some affiliate links, and we may earn a commission when you click on the links at no additional cost to you.