Flight prices can change within hours, sometimes even minutes. For businesses working with travel data, this constant fluctuation creates a real challenge. Relying on outdated pricing information doesn’t just lead to minor inaccuracies; it can result in missed opportunities, poor customer experiences, and ultimately lost revenue.

In a space where timing is everything, speed is important. But more than anything, reliability is what truly makes the difference.

What Is Travel Price Scraping?

Travel price scraping is the process of automatically collecting pricing data from travel platforms. This includes everything from flight fares and hotel rates to car rental prices, all gathered directly from websites in real time.

For businesses, this data is incredibly valuable. It helps compare prices, monitor competitors, and make smarter pricing decisions. Instead of manually checking multiple platforms, scraping allows you to pull all that information together quickly and efficiently.

But here’s where it gets tricky. Unlike data in most industries, travel data is both dynamic and fragmented. Prices change frequently based on demand, availability, location, and even browsing behavior. At the same time, this data is spread across multiple platforms like airlines, aggregators, hotel chains, and rental services, each with its own structure and restrictions.

This combination makes travel price scraping far more complex than it first appears. It’s not just about collecting data; it’s about collecting the right data, consistently and reliably.

Why Reliability Is the Real Challenge

Travel data and pricing are constantly in motion. What you see at one moment may already be outdated the next.

Availability adds another layer of complexity. A hotel room or flight seat might appear available during one request and disappear in the next, making consistency difficult to maintain.

Then there’s geo-based pricing. The same flight or hotel can show different prices depending on the user’s location, device, or browsing behavior. Without the right setup, you’re seeing only one version of the price, not the full picture.

On top of that, travel platforms frequently update their UI and APIs. Small structural changes can break scraping pipelines, leading to incomplete or incorrect data without immediate notice.

Getting accurate, consistent, real-time data is not easy. That’s exactly where reliability becomes the biggest challenge.

Key Data Points in Travel Price Scraping

To get a complete and reliable picture, you need to focus on a few critical elements:

- Flight Fares: The base ticket price, which can change rapidly based on demand and timing.

- Hotel Pricing: Room rates that vary by date, occupancy, and booking platform.

- Availability: Whether a flight seat, hotel room, or rental option is actually bookable at that moment.

- Taxes & Hidden Costs: Additional fees that often appear at checkout and can significantly impact the final price.

- Reviews & Ratings: While not pricing data directly, they heavily influence user decisions and perceived value.
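The data points above can be held in a single record type so that every source is compared on the same fields. The following is a minimal sketch, not a prescribed schema: the class name `PriceObservation`, its field names, and the sample values are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal
from typing import Optional


@dataclass(frozen=True)
class PriceObservation:
    """One scraped price for one item at one moment (hypothetical schema)."""
    source: str                     # platform the price came from
    item_id: str                    # e.g. a flight number or room code
    base_price: Decimal             # advertised fare or room rate
    taxes_and_fees: Decimal         # charges that often surface at checkout
    currency: str                   # ISO 4217 code such as "USD"
    available: bool                 # was it bookable when observed?
    observed_at: datetime           # observation time, in UTC
    rating: Optional[float] = None  # review score, if the platform shows one

    @property
    def total_price(self) -> Decimal:
        # Compare on the all-in price rather than the headline price.
        return self.base_price + self.taxes_and_fees


obs = PriceObservation(
    source="example-ota",           # made-up source name
    item_id="XY123",                # made-up identifier
    base_price=Decimal("199.00"),
    taxes_and_fees=Decimal("42.50"),
    currency="USD",
    available=True,
    observed_at=datetime.now(timezone.utc),
    rating=4.3,
)
print(obs.total_price)  # 241.50
```

Using `Decimal` instead of `float` avoids rounding drift when prices are summed or compared, and storing the observation time makes it possible to tell how stale a price is.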

Core Use Cases

Here are some of the most strategic ways travel price scraping is used:

Competitive Price Monitoring

Keeping track of competitor pricing in real time allows businesses to stay relevant. With continuous monitoring, pricing strategies can be updated instantly to stay competitive.

Dynamic Pricing Systems

Instead of setting static prices, businesses can use scraped data to automate pricing decisions. By reacting to market changes, demand, and competitor rates, dynamic pricing systems ensure prices remain optimized at all times.
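As a rough illustration of reacting to competitor rates, the sketch below suggests a price slightly under the cheapest competitor while staying inside a configured floor and ceiling. The function name `suggest_price`, the undercut amount, and the fallback to the ceiling when no competitor data exists are assumptions for this example; a production pricing system would weigh many more signals.

```python
from decimal import Decimal
from typing import Iterable


def suggest_price(
    competitor_prices: Iterable[Decimal],
    floor: Decimal,
    ceiling: Decimal,
    undercut: Decimal = Decimal("1.00"),
) -> Decimal:
    """Undercut the cheapest competitor slightly, clamped to [floor, ceiling]."""
    prices = list(competitor_prices)
    if not prices:
        # No market signal available: keep the ceiling rather than guess.
        return ceiling
    target = min(prices) - undercut
    return max(floor, min(ceiling, target))


suggested = suggest_price(
    [Decimal("120"), Decimal("135")],
    floor=Decimal("100"),
    ceiling=Decimal("150"),
)
```

The floor protects margin when a competitor drops its price sharply, which matters if the scraped value later turns out to be an error.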

Demand Forecasting

Historical and real-time data together help identify patterns like peak seasons, high-demand routes, and booking behaviors. This makes it easier to anticipate demand and plan pricing or inventory accordingly.

Aggregators & Comparison Platforms

Platforms that compare flights, hotels, or rentals rely heavily on real-time data. Accurate scraping ensures users see up-to-date prices across multiple providers, making comparisons reliable and useful.

What Breaks Travel Scraping Systems

Several common challenges cause travel scraping pipelines to fail:

- Rate Limits: Travel platforms restrict how frequently you can send requests, making high-volume scraping difficult to sustain.

- IP Bans: Repeated requests from the same source can quickly get blocked, cutting off access entirely.

- Geo-Restrictions: Pricing and availability often vary by location, so without proper geo-targeting, the data becomes incomplete or misleading.

- Dynamic Content (JS-Heavy Sites): Many travel platforms rely on JavaScript to load data, which standard scrapers can’t easily capture.

- Inconsistent Data Formats: Different platforms structure their data differently, making it harder to standardize and compare.
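The last challenge in the list, inconsistent data formats, is usually handled by mapping each source onto one shared shape. The sketch below assumes two made-up payload layouts (an "airline" and an "aggregator"); the field names are hypothetical and do not describe any real platform.

```python
from decimal import Decimal


def normalize_airline(raw: dict) -> dict:
    # Hypothetical payload: {"fare": "199.00", "cur": "usd", "seats_left": 3}
    return {
        "price": Decimal(raw["fare"]),
        "currency": raw["cur"].upper(),
        "available": raw["seats_left"] > 0,
    }


def normalize_aggregator(raw: dict) -> dict:
    # Hypothetical payload:
    # {"price_cents": 19900, "currency": "USD", "status": "AVAILABLE"}
    return {
        "price": Decimal(raw["price_cents"]) / 100,
        "currency": raw["currency"].upper(),
        "available": raw["status"] == "AVAILABLE",
    }


# One normalizer per source; adding a platform means adding one entry.
NORMALIZERS = {
    "airline": normalize_airline,
    "aggregator": normalize_aggregator,
}


def normalize(source: str, raw: dict) -> dict:
    """Map a source-specific record onto the shared shape."""
    record = NORMALIZERS[source](raw)
    record["source"] = source
    return record


a = normalize("airline", {"fare": "199.00", "cur": "usd", "seats_left": 3})
b = normalize(
    "aggregator",
    {"price_cents": 19900, "currency": "USD", "status": "AVAILABLE"},
)
```

Once both records share the same keys and units, they can be compared directly, and a site change only requires updating the one normalizer that broke.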

Building a Reliable Travel Scraping System

Reliability comes from how well each part of the pipeline is designed:

Real-Time Data Collection

Relying only on scheduled scraping isn’t enough in a fast-moving industry like travel. Prices can change between intervals, leading to outdated data. A more reliable approach combines scheduled jobs with event-based scraping, ensuring data stays fresh when it matters most.

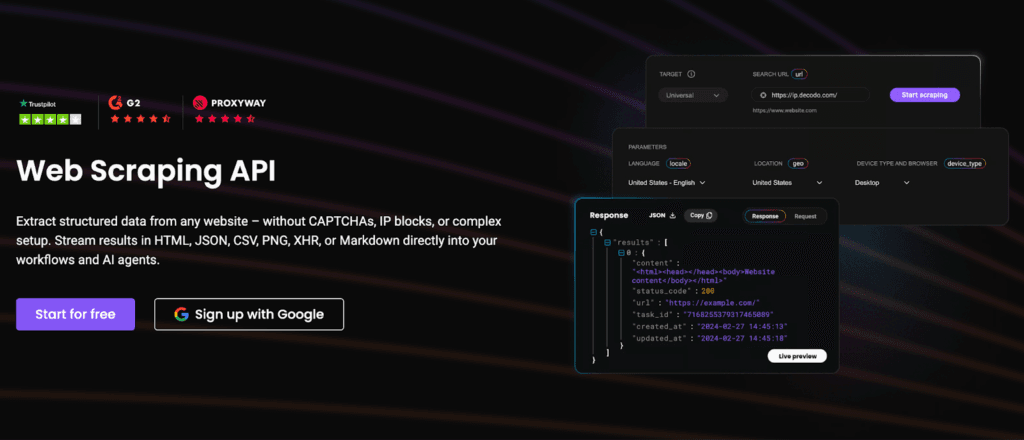
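One way to combine scheduled jobs with event-based scraping is to keep a small policy object that answers a single question: is this route or listing due for a refresh? The sketch below is an assumption about how such a policy might look; the class name `RefreshPolicy` and the idea of what counts as an "event" (for example, a user search) are illustrative only.

```python
from datetime import datetime, timedelta, timezone


class RefreshPolicy:
    """Decide when a route or listing should be scraped again."""

    def __init__(self, interval: timedelta):
        self.interval = interval
        self.last_scraped = {}        # key -> datetime of last scrape
        self.pending_events = set()   # keys with an unhandled trigger

    def notify(self, key: str) -> None:
        # Event trigger, e.g. a user search or a detected competitor change.
        self.pending_events.add(key)

    def is_due(self, key: str, now: datetime) -> bool:
        if key in self.pending_events:
            return True               # event-based: refresh right away
        last = self.last_scraped.get(key)
        # Scheduled: refresh if never scraped or the interval has elapsed.
        return last is None or now - last >= self.interval

    def mark_scraped(self, key: str, now: datetime) -> None:
        self.last_scraped[key] = now
        self.pending_events.discard(key)


policy = RefreshPolicy(interval=timedelta(minutes=30))
t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
policy.mark_scraped("JFK-LHR", t0)
policy.notify("JFK-LHR")  # something happened before the next scheduled run
```

Here the route becomes due again immediately after `notify`, even though the 30-minute interval has not elapsed, which is the behavior the scheduled-only approach lacks.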

Handling Dynamic Content

Modern travel platforms are heavily dependent on JavaScript, which means traditional scraping methods often fall short. Using headless browsers allows you to render pages fully, while an API-first approach can simplify data extraction and improve reliability.

Proxy Infrastructure

Reliable scraping at scale depends heavily on proxy infrastructure. Solutions like Decodo help distribute requests across regions, making it possible to access geo-specific pricing without triggering blocks. This not only improves access but also ensures the data reflects real user conditions.

Geo-Targeting for Accurate Pricing

In travel, the same hotel or flight doesn’t have the same price everywhere. Pricing can vary based on location, currency, and user profile. Geo-targeting allows you to simulate requests from different regions, giving you a more accurate and complete view of pricing.
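To make the idea of simulating requests from different regions concrete, the sketch below routes the same request through one proxy per region using only Python's standard library. The proxy hostnames, ports, and credentials are placeholders, not real endpoints from any provider, and the helper names are assumptions for this example.

```python
import urllib.request
from typing import Sequence

# Placeholder endpoints, one per region. Real hosts, ports, and credentials
# come from whichever proxy provider you use.
REGION_PROXIES = {
    "us": "http://user:pass@us.proxy.example:8000",
    "de": "http://user:pass@de.proxy.example:8000",
    "jp": "http://user:pass@jp.proxy.example:8000",
}


def proxies_for(region: str) -> dict:
    """Return a urllib-style proxy mapping for one region."""
    proxy_url = REGION_PROXIES[region]
    return {"http": proxy_url, "https": proxy_url}


def opener_for(region: str) -> urllib.request.OpenerDirector:
    """Build an opener whose requests leave through the region's proxy."""
    handler = urllib.request.ProxyHandler(proxies_for(region))
    return urllib.request.build_opener(handler)


def fetch_from_regions(url: str, regions: Sequence[str], timeout: float = 10.0) -> dict:
    """Fetch the same URL once per region so the responses can be compared."""
    pages = {}
    for region in regions:
        with opener_for(region).open(url, timeout=timeout) as resp:
            pages[region] = resp.read()
    return pages
```

Calling `fetch_from_regions(url, ["us", "de", "jp"])` would return one response body per region, which can then be parsed and compared to see how price and currency differ by location.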

Data Validation & Cleaning

Even with the right setup, raw data isn’t always reliable. Duplicate entries, missing fields, or sudden anomalies can distort insights. Cleaning and validating data by removing duplicates and flagging inconsistencies is essential for maintaining accuracy.
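A cleaning step along these lines could look like the sketch below. It assumes each record is a dict with `item_id`, `price`, and `currency` keys, and that all records in a batch are comparable (for example, the same route and date), so that the batch median is a fair reference point. The 50% deviation threshold is an arbitrary illustration, not a recommended value.

```python
from statistics import median

REQUIRED_FIELDS = ("item_id", "price", "currency")


def clean(records: list, max_deviation: float = 0.5):
    """Split raw records into clean rows and flagged rows.

    A row is flagged when a required field is missing, or when its price
    differs from the batch median by more than `max_deviation`.
    Exact duplicates are dropped silently.
    """
    seen = set()
    complete, flagged = [], []
    for rec in records:
        if any(rec.get(field) is None for field in REQUIRED_FIELDS):
            flagged.append((rec, "missing field"))
            continue
        key = (rec["item_id"], rec["price"], rec["currency"])
        if key in seen:
            continue  # duplicate entry
        seen.add(key)
        complete.append(rec)

    if not complete:
        return [], flagged

    mid = median(r["price"] for r in complete)
    clean_rows = []
    for rec in complete:
        if mid and abs(rec["price"] - mid) / mid > max_deviation:
            flagged.append((rec, "price anomaly"))
        else:
            clean_rows.append(rec)
    return clean_rows, flagged
```

Flagged rows are returned rather than discarded so that a person or a downstream check can decide whether an unusual price is a scraping error or a genuine fare change.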

The Role of Monitoring in Price Accuracy

A few key metrics make all the difference:

- Success Rate: Are your requests actually returning valid data, or silently failing?

- Missing Fields: Are critical data points like price, availability, or taxes consistently captured?

- Price Anomalies: Are there sudden spikes, drops, or inconsistencies that don’t match real-world trends?

Tracking these signals helps you catch issues early, before they impact decisions or user experience.
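The three signals above can be computed per scraping batch with very little code. The sketch below assumes each result is a dict of the form `{"ok": bool, "data": dict or None}`; that shape, the function names, and the alert thresholds are assumptions chosen for illustration.

```python
def batch_health(results: list, required=("price", "availability", "taxes")) -> dict:
    """Summarize success rate and missing-field rate for one batch."""
    total = len(results)
    ok = [r for r in results if r["ok"] and r["data"]]
    incomplete = [
        r for r in ok
        if any(r["data"].get(field) is None for field in required)
    ]
    return {
        "success_rate": len(ok) / total if total else 0.0,
        "missing_field_rate": len(incomplete) / len(ok) if ok else 0.0,
    }


def is_price_anomaly(previous: float, current: float, max_change: float = 0.4) -> bool:
    """Flag a price that moved more than `max_change` since the last observation."""
    if previous <= 0:
        return True  # a non-positive reference price is itself suspicious
    return abs(current - previous) / previous > max_change


def should_alert(health: dict, min_success: float = 0.95, max_missing: float = 0.02) -> bool:
    # Thresholds are illustrative; tune them against your own baseline.
    return (
        health["success_rate"] < min_success
        or health["missing_field_rate"] > max_missing
    )
```

Counting a response as successful only when it carries data is what catches silent failures, such as a block page returned with a normal status code.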

Platforms like Decodo also support consistent request delivery, making monitoring systems more reliable by reducing data gaps caused by blocked or failed requests.

Best Practices for Reliable Travel Price Scraping

Here are some of the key best practices to keep in mind:

- Respect Rate Limits: Sending too many requests too quickly can trigger blocks. Spacing out requests helps maintain access and reduce the risk of getting flagged.

- Rotate IPs: Using different IP addresses distributes requests and prevents overloading a single source, improving long-term reliability.

- Randomize Requests: Varying request intervals and patterns to mimic natural user behavior makes scraping less predictable and harder to detect.

- Monitor Continuously: Keep an eye on success rates, missing data, and anomalies to catch issues early and maintain data quality.

- Adapt to Site Changes: Travel platforms frequently update their structure, so your scraping logic needs to evolve alongside them.
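Spacing out requests, rotating IPs, and randomizing intervals can be combined in one small request planner. The following is a sketch under stated assumptions: the proxy URLs are placeholders, and `fetch` is a caller-supplied function (hypothetical here) that performs the actual request through the given proxy.

```python
import itertools
import random
import time

# Placeholder proxy pool; substitute the endpoints your provider gives you.
PROXY_POOL = [
    "http://proxy-1.example:8000",
    "http://proxy-2.example:8000",
    "http://proxy-3.example:8000",
]


def jittered_delay(base_seconds: float, jitter: float = 0.5) -> float:
    """Return a delay in [base * (1 - jitter), base * (1 + jitter)]."""
    return base_seconds * random.uniform(1 - jitter, 1 + jitter)


def plan_requests(urls, base_seconds: float = 2.0):
    """Yield (url, proxy, delay): next proxy in rotation plus a varied pause."""
    proxies = itertools.cycle(PROXY_POOL)
    for url in urls:
        yield url, next(proxies), jittered_delay(base_seconds)


def run(urls, fetch, sleep=time.sleep):
    """Call `fetch(url, proxy)` for each URL, pausing between requests."""
    results = []
    for url, proxy, delay in plan_requests(urls):
        results.append(fetch(url, proxy))
        sleep(delay)
    return results
```

Passing `sleep` as a parameter keeps the pacing logic testable without real waiting, and round-robin rotation is only the simplest policy; a real pool would likely also retire proxies that start failing.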

Common Mistakes to Avoid

Here’s what to watch out for:

- Scraping Too Aggressively: Sending too many requests too quickly often leads to rate limits or IP bans, cutting off access.

- Ignoring Geo Differences: Without location-based requests, you’re only capturing partial pricing data, which can be misleading.

- Relying On Static Scripts: Travel platforms change frequently, and scripts that aren’t updated will eventually break.

- No Monitoring in Place: Without tracking performance, failures can go unnoticed until the data is already compromised.

- Using Low-Quality Proxies: Poor proxy infrastructure increases the chances of blocks, inconsistent data, and failed requests.

Future of Travel Price Scraping

Travel pricing is only becoming more dynamic, and the systems behind it are evolving just as quickly. What used to be simple price tracking is now shifting toward smarter, more adaptive models.

One major shift is AI-driven pricing. Travel platforms are increasingly using machine learning to adjust prices in real time based on user demand, behavior, and market conditions.

Alongside that, real-time personalization is changing how users see prices. Two users searching for the same flight or hotel may see different results based on location, browsing history, or preferences.

There’s also a growing focus on predictive pricing models. Instead of reacting to changes, businesses are starting to anticipate them by forecasting demand, identifying trends, and adjusting prices proactively.

As travel data becomes more dynamic, infrastructure providers like Decodo will play a key role in supporting scalable, real-time data collection without disruptions.

In travel, the difference between winning and losing often comes down to who has the most accurate data, at the right time.

Speed helps you keep up with constant price changes. Accuracy ensures the data you rely on is actually trustworthy. Adaptability allows your system to evolve as platforms, pricing models, and user behaviors change.

Individually, each of these matters. But together, they define reliability. In a market where every price shift can influence a decision, reliability isn’t just a nice-to-have; it’s a competitive edge.

Explore our other blogs to learn how proxy infrastructure powers reliable, large-scale data collection:

- Geo-Targeted Web Scraping: Access Data Worldwide with a Huge IP Pool

- Web Scraping at Scale with Smart, Multi-Region Infrastructure

- Avoid IP Blocking in Web Scraping with IP Rotation

FAQs

A typical setup includes a data collection layer (scrapers or headless browsers), a proxy layer for distributing requests, a processing layer for cleaning and structuring data, and a monitoring layer to track performance and reliability.

APIs are more stable and easier to use when available, but they often provide limited data. Scraping offers more flexibility but requires maintenance. A hybrid approach, using APIs where possible and scraping to fill the gaps, is often the most reliable strategy.

It depends on your use case. Scrapy works well for large-scale, structured scraping, while tools like Playwright or Puppeteer are better for handling dynamic, JavaScript-heavy websites.

Geo-targeting is typically achieved through proxy networks that allow you to send requests from different locations. This helps capture region-specific pricing and availability accurately.

Accurate, real-time data enables better pricing decisions, more competitive offers, and improved user trust, all of which can directly impact conversion rates and overall revenue.

Disclosure – This post contains some sponsored links and some affiliate links, and we may earn a commission when you click on the links at no additional cost to you.