Property listings don’t just sit online. Prices change, listings disappear, and new properties show up every single day.

If you’re trying to track this manually, you’re already behind. The reality of real estate data is that it moves fast, and manual tracking simply can’t keep up.

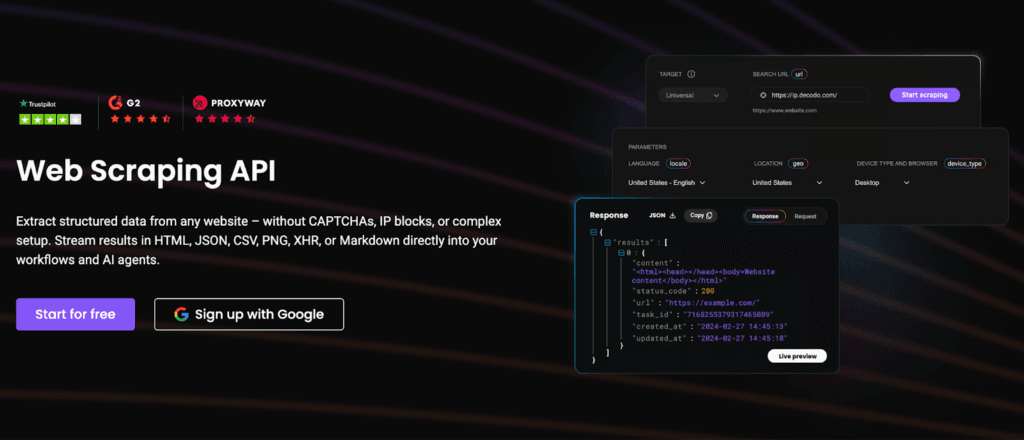

What really matters is having data that’s fresh, complete, and scalable. That’s where efficient data scraping comes in, helping you stay updated in real time and make smarter, faster decisions.

What is Real Estate Listings Scraping

Real estate listings scraping is the process of automatically collecting property data from websites instead of gathering it manually.

This typically includes data like:

- Property price

- Location

- Size and specifications

- Agent or seller information

Why “Efficiency at Scale” is the Real Challenge

Scraping a few listings is easy; scraping millions consistently is where most systems break.

Real estate listings change constantly. New properties get listed, prices are updated, and older listings disappear, sometimes within hours. Now multiply that across multiple websites, cities, and property types, and the volume quickly becomes overwhelming.

You need to refresh data constantly to keep it relevant. At scale, the challenge isn’t just scraping; it’s doing it quickly, reliably, and repeatedly without gaps or failures.

Key Data Points to Extract from Listings

Here are the key data points to focus on:

- Pricing: Current price, historical price changes, and discounts

- Property Specs: Bedrooms, bathrooms, square footage, amenities

- Location: Address, locality, city, and sometimes geo-coordinates

- Listing Status: Active, sold, pending, or removed listings

- Agent Information: Agent name, contact details, and agency
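The data points above map naturally onto a typed record. Below is one possible schema in Python, assuming a dataclass-based pipeline; the field names and defaults are illustrative rather than any standard, and you would adjust units and currency handling to your markets.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import List, Optional, Tuple


class ListingStatus(str, Enum):
    ACTIVE = "active"
    PENDING = "pending"
    SOLD = "sold"
    REMOVED = "removed"


@dataclass
class ListingRecord:
    # Identity: which site the record came from and that site's own ID
    source: str
    source_id: str
    url: str
    # Pricing: store parsed numbers, never display strings like "$1.2M"
    price: Optional[float] = None
    currency: str = "USD"
    price_history: List[Tuple[str, float]] = field(default_factory=list)  # (ISO date, price)
    # Property specs
    bedrooms: Optional[int] = None
    bathrooms: Optional[float] = None  # fractional to allow half baths
    area_sqft: Optional[float] = None
    amenities: List[str] = field(default_factory=list)
    # Location
    address: Optional[str] = None
    locality: Optional[str] = None
    city: Optional[str] = None
    latitude: Optional[float] = None
    longitude: Optional[float] = None
    # Listing status
    status: ListingStatus = ListingStatus.ACTIVE
    # Agent information
    agent_name: Optional[str] = None
    agent_contact: Optional[str] = None
    agency: Optional[str] = None
    # When this snapshot was captured (UTC), needed later for freshness checks
    scraped_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
```

Keeping `source` and `source_id` on every record makes cross-platform deduplication and price-history tracking much easier further down the pipeline.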

What Breaks Real Estate Scraping at Scale

Once you start pulling data across multiple platforms and thousands of listings, things begin to fall apart.

Here’s what typically gets in the way:

- Anti-Bot Systems: Websites use CAPTCHAs, rate limits, and IP blocking to prevent automated access

- Dynamic Content: Listings often load via JavaScript or infinite scroll, making them harder to capture reliably

- Inconsistent Formats: Every platform structures data differently, which makes standardization a challenge

- Duplicate Listings: The same property can appear multiple times across platforms, cluttering your dataset

- Frequent Site Changes: Even small layout updates can break your scraping logic overnight

Building an Efficient Scraping Pipeline

Scraping at scale is about building a pipeline that works reliably end-to-end.

Source Selection & Coverage

Start with the right sources. Major real estate portals give you volume, but regional and niche sites add depth. The more diverse your sources, the better your market visibility.

Broader coverage means better insights and fewer blind spots.

Structured Extraction Workflows

Efficiency comes from structure. A solid pipeline typically includes:

- Handling pagination to move through listing pages

- Extracting detailed data from individual property pages

- Following a schema-first approach so that all data fits a consistent format

When your extraction is structured, everything downstream becomes easier.
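Here is a minimal sketch of that workflow, assuming you already have two site-specific functions: one that returns the listing summaries on a numbered results page, and one that returns the fields from a property’s detail page. Both are hypothetical placeholders passed in as callables, and `SCHEMA_FIELDS` is an illustrative schema, not a recommended one.

```python
from typing import Callable, Dict, Iterator, List, Set

# Illustrative schema: every record leaving the crawler has exactly these keys.
SCHEMA_FIELDS = ("id", "url", "price", "city", "bedrooms")


def crawl(
    fetch_index_page: Callable[[int], List[Dict]],
    fetch_detail: Callable[[str], Dict],
    max_pages: int = 500,
) -> Iterator[Dict]:
    """Paginate through results, enrich each listing from its detail page,
    and project the merged data onto SCHEMA_FIELDS."""
    seen: Set[str] = set()
    for page in range(1, max_pages + 1):  # max_pages guards against endless pagination
        new_on_page = 0
        for summary in fetch_index_page(page):
            if summary["id"] in seen:
                continue  # already collected from an earlier page
            seen.add(summary["id"])
            new_on_page += 1
            detail = fetch_detail(summary["url"])
            merged = {**summary, **detail}
            # Unknown keys are dropped; missing ones become None, so gaps stay visible
            yield {name: merged.get(name) for name in SCHEMA_FIELDS}
        if new_on_page == 0:
            break  # past the last page, or the site is repeating its final page
```

Stopping on "no new IDs" rather than only on "empty page" protects against sites that keep serving the last page for out-of-range page numbers.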

Handling Dynamic Websites

Modern real estate sites aren’t static. To deal with dynamic content, you’ll need:

- Headless browsers that render pages the way a real user’s browser would

- JavaScript execution to load data that only appears after the initial page load

- API fallbacks where available for faster, cleaner extraction

This ensures you’re not missing data that doesn’t appear in raw HTML.
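Before reaching for a headless browser, it is often worth checking whether the page already ships its data as JSON. Many JavaScript-heavy sites embed their initial state in a `<script>` tag (Next.js, for example, uses `<script id="__NEXT_DATA__" type="application/json">`); whether a given portal does this, and under which id, is an assumption you need to verify per site. This sketch uses only the standard library:

```python
import json
from html.parser import HTMLParser
from typing import Any, List, Optional


class _ScriptJsonFinder(HTMLParser):
    """Collects the text of the first <script> whose id matches target_id."""

    def __init__(self, target_id: str) -> None:
        super().__init__()
        self.target_id = target_id
        self._capturing = False
        self._done = False
        self.chunks: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and not self._done and dict(attrs).get("id") == self.target_id:
            self._capturing = True

    def handle_endtag(self, tag):
        if tag == "script" and self._capturing:
            self._capturing = False
            self._done = True

    def handle_data(self, data):
        # Script bodies may arrive in several chunks, so collect them all
        if self._capturing:
            self.chunks.append(data)


def extract_embedded_json(html: str, script_id: str = "__NEXT_DATA__") -> Optional[Any]:
    """Return the parsed JSON from the matching script tag, or None if absent."""
    finder = _ScriptJsonFinder(script_id)
    finder.feed(html)
    finder.close()
    if not finder.chunks:
        return None
    return json.loads("".join(finder.chunks))
```

If this returns `None` for a site, that is a signal to fall back to a headless browser for that source.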

Proxy Infrastructure

At scale, scraping real estate platforms requires robust proxy infrastructure. Solutions like Decodo help distribute requests, avoid IP bans, and maintain stable access across high-volume listing pages.

Without this layer, your pipeline will struggle to stay consistent.
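At the code level, rotation can be as simple as cycling through a pool of proxy endpoints. The sketch below uses only Python’s standard library; the proxy URLs and the `USER_AGENT` string are placeholders, and in practice a provider may instead give you a single rotating gateway endpoint, in which case the pool has one entry.

```python
import itertools
import urllib.request
from typing import List, Tuple

# Placeholder identity; use one that lets site owners reach you.
USER_AGENT = "listings-research-bot/0.1 (+https://example.com/bot)"


class ProxyRotator:
    """Round-robin over a pool of proxy URLs, with one prebuilt opener per proxy."""

    def __init__(self, proxy_urls: List[str]) -> None:
        if not proxy_urls:
            raise ValueError("need at least one proxy URL")
        openers = [
            (url, urllib.request.build_opener(
                urllib.request.ProxyHandler({"http": url, "https": url})))
            for url in proxy_urls
        ]
        self._pool = itertools.cycle(openers)

    def next_opener(self) -> Tuple[str, urllib.request.OpenerDirector]:
        """Return the next (proxy_url, opener) pair in rotation."""
        return next(self._pool)

    def fetch(self, url: str, timeout: float = 15.0) -> Tuple[str, bytes]:
        """GET url through the next proxy; return the proxy used and the body."""
        proxy, opener = self.next_opener()
        request = urllib.request.Request(url, headers={"User-Agent": USER_AGENT})
        with opener.open(request, timeout=timeout) as response:
            return proxy, response.read()
```

Round-robin is the simplest policy; a production rotator would typically also pull proxies that keep returning blocks out of the pool for a while.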

Data Cleaning and Normalization

Raw listing data is messy, and only clean data is worth analyzing. To make your data usable:

- Remove duplicates across platforms

- Standardize formats (price, area, location names)

- Validate fields to ensure accuracy

This is where raw data turns into something you can actually analyze and trust.
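As a rough illustration of those steps, here is a sketch that parses price and area strings into numbers and collapses listings that share a normalized address. It assumes US-style number formatting (commas as thousands separators) and uses exact matching on a normalized address key; real cross-platform matching often needs fuzzier comparison or geocoding, which this does not attempt. The abbreviation table is a small hypothetical sample.

```python
import re
from typing import Dict, List, Optional

SQM_TO_SQFT = 10.7639

# Small, hypothetical sample of address abbreviations; extend per market.
ABBREVIATIONS = {"street": "st", "avenue": "ave", "road": "rd", "apartment": "apt"}

_NUMBER = r"(\d[\d,]*(?:\.\d+)?)"


def parse_price(text: str) -> Optional[float]:
    """'$1,250,000' -> 1250000.0, '€450k' -> 450000.0, 'Price on request' -> None."""
    match = re.search(_NUMBER + r"(?:\s*([kKmM])\b)?", text)
    if not match:
        return None  # nothing numeric: leave the field empty rather than guess
    value = float(match.group(1).replace(",", ""))
    multiplier = {"": 1, "k": 1_000, "m": 1_000_000}[(match.group(2) or "").lower()]
    return value * multiplier


def parse_area_sqft(text: str) -> Optional[float]:
    """Return floor area in square feet, converting from square metres if needed."""
    match = re.search(_NUMBER + r"\s*(sq\.?\s*ft|ft²|sq\.?\s*m|m²|m2)", text, re.IGNORECASE)
    if not match:
        return None
    value = float(match.group(1).replace(",", ""))
    unit = match.group(2).lower()
    return value if "ft" in unit else round(value * SQM_TO_SQFT, 1)


def address_key(address: str, city: str) -> str:
    """Normalize case, punctuation, and common abbreviations into a matching key."""
    text = re.sub(r"[^\w\s]", " ", f"{address} {city}".lower())
    return " ".join(ABBREVIATIONS.get(token, token) for token in text.split())


def dedupe(listings: List[Dict]) -> List[Dict]:
    """Keep one record per address key, preferring the most recently scraped copy."""
    best: Dict[str, Dict] = {}
    for item in listings:
        key = address_key(item["address"], item["city"])
        if key not in best or item["scraped_at"] > best[key]["scraped_at"]:
            best[key] = item
    return list(best.values())
```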

Scaling Without Breaking Your Pipeline

As listing data grows across platforms, your pipeline needs to handle more requests, more updates, and more variability all at once.

Here’s what that looks like in practice:

- Distributed Scraping: Instead of relying on a single process, workloads are split across multiple systems to handle large volumes efficiently.

- Parallel Requests: Running multiple requests concurrently, while staying within each site’s rate limits, cuts total collection time.

- Scheduling & Refresh Cycles: Regular scraping intervals ensure your data stays up-to-date as listings change throughout the day.

Your pipeline needs to adapt continuously to keep up. Reliable proxy rotation from providers like Decodo helps keep scraping pipelines stable even under high request volumes and strict anti-bot systems.
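Parallel requests and polite pacing are not in conflict as long as concurrency is bounded. Here is a minimal asyncio sketch, assuming you supply an async `fetch` coroutine built on whichever HTTP client you use: a semaphore caps in-flight requests and a random pause spaces them out. The default limits are placeholders to tune per site.

```python
import asyncio
import random
from typing import Awaitable, Callable, Dict, List, Union


async def fetch_all(
    urls: List[str],
    fetch: Callable[[str], Awaitable[bytes]],
    max_concurrency: int = 8,
    min_delay: float = 0.5,
    max_delay: float = 1.5,
) -> Dict[str, Union[bytes, Exception]]:
    """Fetch many URLs concurrently, never more than max_concurrency at once."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def worker(url: str):
        async with semaphore:
            # Random pause while holding the slot spaces requests out (jitter)
            await asyncio.sleep(random.uniform(min_delay, max_delay))
            try:
                return url, await fetch(url)
            except Exception as exc:  # record the failure instead of aborting the batch
                return url, exc

    results = await asyncio.gather(*(worker(u) for u in urls))
    return dict(results)
```

Because each worker holds its slot during the pause, throughput is roughly `max_concurrency` divided by the average pause plus response time. In a multi-site pipeline you would usually keep one semaphore per domain rather than a single global limit.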

Monitoring & Maintaining Data Quality

At scale, even small issues can compound quickly. That’s why continuous monitoring is critical.

Here’s what to keep an eye on:

- Missing Fields: Are key data points like price or location dropping off? This often signals broken selectors or site changes

- Success Rate: What percentage of requests are actually returning usable data? A drop here usually means blocks or failures

- Data Freshness: How often is your dataset updated? Stale data defeats the purpose of scraping in fast-moving markets

When you actively track these metrics, you’re not just scraping. You start maintaining a system that stays accurate, efficient, and dependable.
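Those three metrics are cheap to compute after every run. The sketch below assumes `attempted` and `succeeded` come from the latest run, while `records` is the dataset as currently stored, each a dict with a timezone-aware `scraped_at` timestamp. The alert thresholds are illustrative defaults, not recommendations.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional, Sequence


def quality_report(
    attempted: int,
    succeeded: int,
    records: List[dict],
    key_fields: Sequence[str] = ("price", "address", "city"),
    max_age: timedelta = timedelta(hours=24),
    now: Optional[datetime] = None,
    # Illustrative alert thresholds; tune them to your own baseline
    min_success: float = 0.9,
    max_missing: float = 0.05,
    max_stale: float = 0.1,
) -> dict:
    now = now or datetime.now(timezone.utc)
    n = len(records)
    success_rate = succeeded / attempted if attempted else 0.0
    # Share of records where each key field is absent or empty
    missing: Dict[str, float] = {
        f: (sum(1 for r in records if r.get(f) in (None, "")) / n if n else 0.0)
        for f in key_fields
    }
    # Share of records not refreshed within max_age
    stale_share = (
        sum(1 for r in records if now - r["scraped_at"] > max_age) / n if n else 0.0
    )

    alerts: List[str] = []
    if success_rate < min_success:
        alerts.append(f"success rate {success_rate:.0%} is below {min_success:.0%}")
    for f, rate in missing.items():
        if rate > max_missing:
            alerts.append(f"field '{f}' missing in {rate:.0%} of records")
    if stale_share > max_stale:
        alerts.append(f"{stale_share:.0%} of records are older than {max_age}")
    return {"success_rate": success_rate, "missing": missing,
            "stale_share": stale_share, "alerts": alerts}
```

A sudden jump in one field’s missing rate, with the success rate unchanged, usually points to a broken selector rather than a block.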

Best Practices for Efficient Real Estate Scraping

Here are a few best practices to keep your pipeline stable and reliable:

- Don’t Overload Servers: Space out requests and avoid aggressive scraping that can trigger blocks

- Rotate IPs: Distribute requests to reduce the risk of bans and maintain steady access

- Adapt to Site Changes: Regularly update your scraping logic to handle layout or structure changes

- Validate Data Continuously: Check for missing or incorrect fields to keep your dataset accurate

- Respect Compliance: Follow website terms and data usage guidelines to avoid legal or ethical issues
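Several of these practices can live in one fetch helper. The sketch below checks `robots.txt` with the standard library’s `urllib.robotparser`, treats a 403/429/503 response or a page mentioning a CAPTCHA as a likely block, and retries with capped exponential backoff and full jitter. The `fetch` callable (returning a status code and body) is a hypothetical stand-in for your HTTP client, the block heuristics are deliberately crude, and `robots.txt` is only one compliance signal; it does not replace reading a site’s terms.

```python
import random
import time
import urllib.robotparser
from typing import Callable, Optional, Tuple

# Statuses treated as "probably blocked or throttled" (a heuristic, not a rule)
BLOCK_STATUSES = {403, 429, 503}


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 60.0) -> float:
    """Full-jitter exponential backoff: random delay in [0, min(cap, base * 2**attempt)]."""
    return random.uniform(0, min(cap, base * 2 ** attempt))


def looks_blocked(status: int, body: str) -> bool:
    return status in BLOCK_STATUSES or "captcha" in body.lower()


def polite_get(
    url: str,
    fetch: Callable[[str], Tuple[int, str]],
    robots: urllib.robotparser.RobotFileParser,
    user_agent: str,
    max_attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[str]:
    if not robots.can_fetch(user_agent, url):
        return None  # disallowed by robots.txt: skip rather than retry
    for attempt in range(max_attempts):
        status, body = fetch(url)
        blocked = looks_blocked(status, body)
        if status == 200 and not blocked:
            return body
        if blocked or status >= 500:
            sleep(backoff_delay(attempt))  # slow down before trying again
            continue
        return None  # other 4xx such as 404: retrying won't help
    return None  # gave up after max_attempts
```

Jitter keeps many workers from retrying in lockstep after a shared block, which would otherwise re-trigger the same rate limit.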

Common Mistakes to Avoid

As you move from small-scale scraping to larger pipelines, a few common mistakes can quickly derail your efforts:

- Scraping Without a Schema: Collecting data without a defined structure leads to messy, unusable datasets

- Ignoring Duplicates: The same property across multiple platforms can distort your analysis if not handled properly

- No Monitoring: Without tracking performance and data quality, issues go unnoticed until it’s too late

- Over-Aggressive Scraping: Sending too many requests too fast increases the risk of blocks and downtime

- Weak Infrastructure: Without the right setup (proxies, retries, scaling), your pipeline won’t hold up under load

The Future of Real Estate Data Collection

Real estate data collection is becoming smarter, faster, and more automated. Here’s what’s shaping the future:

- AI-Powered Extraction: Smarter systems can now identify and extract data more accurately, even from complex or unstructured pages

- Automated Valuation Models (AVMs): Data isn’t just collected; it is used to estimate property values in real time

- Real-Time Market Intelligence: Instead of static datasets, businesses are moving toward continuously updated insights that reflect live market conditions

As real estate data becomes more dynamic, infrastructure providers like Decodo will be critical for enabling reliable, large-scale data collection without interruptions.

In real estate, those who understand the market fastest win, and that starts with data. The real advantage comes from collecting it at scale while keeping it accurate and reliable.

When your pipeline can continuously collect, clean, and update listings across platforms, you’re no longer reacting to the market. You are staying ahead of it. That’s what turns data into a competitive edge.

FAQs

APIs are faster and more stable, but often limited in what they expose. Scraping gives you full access but requires more maintenance. A hybrid approach usually works best: use APIs where available and scrape everything else.

Proxy rotation involves sending requests through different IP addresses to avoid detection. This is usually handled through proxy providers or middleware that automatically rotates IPs based on request volume and response behavior.

It depends on your target websites and volume. As a rule of thumb, higher request rates require more IPs to distribute traffic safely. Start small, monitor block rates, and scale gradually.

Watch for signals like increased failure rates, CAPTCHAs, or empty responses. When detected, reduce request speed, rotate IPs, and adjust headers or scraping patterns to mimic real user behavior.

A good schema is structured, consistent, and complete. It should include fields like price, location, property specs, and agent details, each standardized so that data from different sources can be easily compared and analyzed.

Disclosure – This post contains some sponsored links and some affiliate links, and we may earn a commission when you click on the links at no additional cost to you.