As businesses increasingly rely on real-time insights, scraping has scaled from pulling a few hundred pages to processing thousands, even millions, of requests. And at that scale, even the smallest delays start to add up.

Slow scraping systems don’t just waste time; they limit what your business can achieve.

What really impacts performance comes down to two core concepts:

- Latency: how quickly each request gets a response

- Bandwidth: how much data you can process over a period of time

Both play a critical role, but focusing on just one isn’t enough. The most efficient scraping systems are the ones that strike the right balance, optimizing for both speed and scale.

Understanding the Bottlenecks in Web Scraping

At scale, performance issues don’t come from just one place. They’re usually a combination of multiple issues working together.

Network Latency

This is the time it takes for a request to travel from your system to the server and back with a response. On its own, it might seem negligible. But when you’re making thousands of requests, even small delays start to stack up quickly.

Input/Output Bottlenecks

In most scraping workflows, your system spends much of its time waiting: for network responses, for API calls, or for data to be written to disk. This waiting is often the biggest bottleneck in a scraping pipeline and can significantly slow down overall performance.

CPU Bottlenecks

Once the data is fetched, it still needs to be processed. Tasks like parsing HTML, extracting relevant information, and transforming data can put pressure on your CPU. While important, this is usually not the primary limiting factor.

Anti-Bot Measures & Rate Limits

Websites today are smarter than ever. Frequent requests can trigger rate limits or anti-bot systems, leading to blocks or delays. This forces scrapers to retry requests, which increases latency and reduces efficiency.
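Retries are unavoidable once rate limits kick in, but how you retry decides how much latency they add. Below is a minimal Python sketch, not tied to any particular HTTP library. It retries with capped exponential backoff and "full jitter", and it honors a server-provided Retry-After delay when one is available. `RetryableError` and the `fetch` coroutine are hypothetical placeholders. In practice you would raise the error when your client sees a 429 or 503 response.

```python
import asyncio
import random


class RetryableError(Exception):
    """Hypothetical error raised for responses worth retrying (e.g. HTTP 429 or 503)."""

    def __init__(self, retry_after=None):
        super().__init__("retryable response")
        self.retry_after = retry_after  # seconds parsed from a Retry-After header, if any


async def fetch_with_backoff(fetch, url, max_attempts=5, base_delay=0.5, max_delay=30.0):
    """Await fetch(url), retrying RetryableError with capped exponential backoff."""
    for attempt in range(max_attempts):
        try:
            return await fetch(url)
        except RetryableError as exc:
            if attempt == max_attempts - 1:
                raise  # out of attempts: let the caller decide what to do
            if exc.retry_after is not None:
                # The server said how long to wait; respect it, within our cap
                delay = min(exc.retry_after, max_delay)
            else:
                # Full jitter: a random wait between 0 and base_delay * 2**attempt
                delay = random.uniform(0, min(max_delay, base_delay * 2 ** attempt))
            await asyncio.sleep(delay)
```

Randomizing the wait spreads retries out. A burst of throttled requests then doesn't come back in lockstep and trip the same limit again.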

Note: Most web scraping tasks are I/O-bound, not CPU-bound.

This distinction is crucial because it changes how you approach optimization. Instead of focusing only on processing power, the real gains come from improving how efficiently your system handles waiting, requests, and data flow.
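A quick way to check which side of this line your own scraper falls on is to compare wall-clock time with CPU time for each stage. The Python sketch below uses `time.perf_counter()` and `time.process_time()` from the standard library. `fake_fetch` and `fake_parse` are hypothetical stand-ins for a real request and a real parser.

```python
import time


def profile_call(func, *args, **kwargs):
    """Run func once; return its result, wall-clock seconds, and CPU share of that time."""
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    result = func(*args, **kwargs)
    wall = time.perf_counter() - wall_start
    cpu = time.process_time() - cpu_start
    # A CPU share near 0 means the time went to waiting (I/O-bound)
    cpu_share = cpu / wall if wall > 0 else 0.0
    return result, wall, cpu_share


def fake_fetch():
    """Stand-in for a network request: it only waits, like a socket read."""
    time.sleep(0.2)
    return "<html>...</html>"


def fake_parse(n=200_000):
    """Stand-in for CPU-heavy parsing: pure computation."""
    return sum(i * i for i in range(n))
```

If the fetch stage shows a CPU share near zero while dominating total time, the pipeline is I/O-bound. In that case concurrency will help far more than a faster CPU.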

The Biggest Mistake: Sequential Scraping

Processing requests one at a time might feel logical. You send a request, wait for the response, process the data, and then move on to the next URL. But at scale, this method quickly becomes inefficient.

Sequential Scraping

- 50 URLs: 50 separate waits

- Each request blocks the next one

- Total time: sum of all individual delays

In this model, your system is constantly waiting, which leads to poor utilization of both time and resources.
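As a rough model, assume every request takes about the same time. The 0.5 s average used here is illustrative, not a measured figure. Sequential total time is then just the sum of the individual waits:

```latex
T_{\text{seq}} = \sum_{i=1}^{N} t_i \approx N\,\bar{t}
\qquad\Rightarrow\qquad
N = 50,\ \bar{t} = 0.5\,\text{s}:\quad T_{\text{seq}} \approx 25\,\text{s}
```

At 10,000 URLs, the same assumption gives about 5,000 s (roughly 83 minutes), spent mostly waiting.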

Concurrent Scraping

- 50 URLs: processed together

- Multiple requests sent simultaneously

- Waiting time overlaps instead of stacking

By handling multiple requests at once, you significantly reduce idle time and improve overall throughput.
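A toy benchmark makes the difference easy to see. This Python sketch uses `asyncio.sleep` as a stand-in for network latency, so it runs without touching any website. That is an assumption: real requests vary in duration and can fail. Ten requests at 0.1 s each take about a second sequentially and roughly 0.1 s concurrently, because the waits overlap.

```python
import asyncio
import time


async def fake_fetch(url, delay=0.1):
    """Stand-in for a network request: waits `delay` seconds, then returns a body."""
    await asyncio.sleep(delay)
    return f"<html>{url}</html>"


async def scrape_sequential(urls):
    # Each await finishes before the next request starts, so the waits stack up
    return [await fake_fetch(u) for u in urls]


async def scrape_concurrent(urls):
    # All requests are in flight at once, so the waits overlap
    return await asyncio.gather(*(fake_fetch(u) for u in urls))


def timed(coro):
    """Run a coroutine to completion and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = asyncio.run(coro)
    return result, time.perf_counter() - start


urls = [f"https://example.com/page/{i}" for i in range(10)]
seq_result, seq_time = timed(scrape_sequential(urls))
conc_result, conc_time = timed(scrape_concurrent(urls))
print(f"sequential: {seq_time:.2f}s, concurrent: {conc_time:.2f}s")
```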

Note: Sequential scraping doesn’t just slow you down. It wastes both dimensions of performance: every request’s latency adds directly to the total run time, and most of your available bandwidth sits unused.

Instead of optimizing performance, it creates a bottleneck: your system is always waiting and never fully uses its capacity.

The Core Principle: Concurrency = Speed

Concurrency allows you to:

- Send multiple requests simultaneously

- Maximize your network usage

- Reduce the time your system spends sitting idle

Instead of waiting for one request to finish before starting the next, concurrency overlaps those waiting periods, which is where the real speed gains come from.

Methods to Achieve Concurrency

Here are a few common approaches:

Async/Await (Best for I/O-Bound Tasks)

This is the most efficient and scalable approach for modern scraping systems. Async programming allows your system to handle multiple requests without blocking, making it ideal for network-heavy operations.
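Here is a minimal async sketch in Python using only `asyncio`. An `asyncio.Semaphore` caps how many requests are in flight at once. That keeps concurrency high without opening an unbounded number of connections. The `fetch` argument is a placeholder for any coroutine that downloads one URL. With a library such as aiohttp or httpx, it would typically wrap a single shared client session.

```python
import asyncio


async def scrape_all(urls, fetch, max_concurrency=10):
    """Fetch every URL concurrently, with at most `max_concurrency` in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded_fetch(url):
        async with semaphore:  # waits here while the limit is reached
            return await fetch(url)

    # gather keeps input order; return_exceptions stops one failure from aborting the rest
    return await asyncio.gather(*(bounded_fetch(u) for u in urls), return_exceptions=True)
```

Passing `return_exceptions=True` means a failed URL shows up as an exception object in the results list instead of aborting the whole batch.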

Multithreading

A simpler way to achieve concurrency, multithreading works well for tasks that involve a lot of waiting, like network requests. It’s relatively easy to implement and can significantly improve performance.
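With threads, the blocking calls stay blocking; the pool simply runs several of them at once. This Python sketch uses the standard library's `ThreadPoolExecutor` and `urllib.request`. The `max_workers=8` value is an arbitrary starting point, not a recommendation.

```python
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def fetch(url, timeout=10):
    """Blocking HTTP GET using only the standard library."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read()


def fetch_all(urls, fetch_func=fetch, max_workers=8):
    """Run a blocking fetch function across a thread pool; results keep input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch_func, urls))
```

For example, `fetch_all(urls)` downloads the pages with up to eight requests in progress at a time. It returns the bodies in the same order as `urls`.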

Multiprocessing

This approach is better suited for CPU-intensive tasks, such as heavy data processing or complex transformations. It spreads work across multiple processes so it can use all of your machine’s CPU cores.
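For the CPU-heavy side, a process pool spreads parsing across cores. In this Python sketch, `count_words` is a deliberately simple stand-in for real parsing; stripping tags with a regex is not a robust HTML parser. The pages are assumed to be already downloaded.

```python
import re
from collections import Counter
from concurrent.futures import ProcessPoolExecutor

TAG_RE = re.compile(r"<[^>]+>")
WORD_RE = re.compile(r"[a-z]+")


def count_words(html):
    """CPU-bound stand-in for parsing: strip tags, then count word frequencies."""
    text = TAG_RE.sub(" ", html).lower()
    return Counter(WORD_RE.findall(text))


def process_pages(pages, max_workers=None):
    """Spread parsing across worker processes (by default, roughly one per CPU)."""
    with ProcessPoolExecutor(max_workers=max_workers) as pool:
        # chunksize sends pages in batches to cut inter-process overhead
        return list(pool.map(count_words, pages, chunksize=16))


if __name__ == "__main__":
    pages = ["<p>Hello scraping world</p>", "<div>hello again</div>"] * 100
    results = process_pages(pages)
    print(results[0].most_common(1))
```

The `__main__` guard matters. On platforms that start worker processes with spawn, each worker re-imports the script, and the guard keeps it from launching pools of its own.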

Note: Async + concurrency is one of the biggest performance unlocks in web scraping.

By shifting from sequential, blocking execution to concurrent execution, you can dramatically improve both speed and efficiency without needing additional hardware.

How to Reduce Latency in Web Scraping

Here are some practical ways to do that:

Use Lightweight Requests Instead of Browsers

Headless browsers can be powerful, but they’re also heavy. They load entire web pages, execute JavaScript, and render content. All of this adds unnecessary delay for most scraping tasks.

In many cases, simple HTTP clients are more than enough. By skipping the rendering layer, you can fetch data much faster and with fewer resources.
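A plain HTTP request plus a lightweight parser is often all a static page needs. This Python sketch uses only the standard library (`urllib.request` and `html.parser`) and pulls the page title as an example field. The User-Agent string is a hypothetical placeholder.

```python
import urllib.request
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collects the text inside the first <title> element."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = None

    def handle_starttag(self, tag, attrs):
        if tag == "title" and self.title is None:
            self._in_title = True
            self.title = ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract_title(html):
    parser = TitleParser()
    parser.feed(html)
    return parser.title.strip() if parser.title is not None else None


def fetch_title(url, timeout=10):
    """Plain HTTP GET: no JavaScript execution, no rendering, no sub-resources."""
    request = urllib.request.Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return extract_title(response.read().decode(charset, errors="replace"))
```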

Optimize Request Size

The more data you request, the longer it takes to transfer.

When you do need a browser, blocking non-essential assets like images, fonts, and scripts can significantly reduce the amount of data each page load transfers. A smaller payload means faster responses and lower latency across your pipeline.
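If you drive a browser with Playwright, request interception is one way to do this. The Python sketch below assumes Playwright's async API and an already-open `page` object. The set of blocked resource types mirrors the list above and is a starting point, not a rule. Blocking scripts is only safe when the data you need doesn't depend on JavaScript.

```python
# Playwright Request.resource_type values for the assets listed above
BLOCKED_RESOURCE_TYPES = frozenset({"image", "font", "script"})


def should_block(resource_type, blocked=BLOCKED_RESOURCE_TYPES):
    """Return True for sub-requests that aren't needed to extract the data."""
    return resource_type in blocked


async def block_heavy_assets(page):
    """Abort blocked resource types on a Playwright page (async API)."""

    async def handle(route):
        if should_block(route.request.resource_type):
            await route.abort()
        else:
            await route.continue_()

    # "**/*" matches every request the page makes
    await page.route("**/*", handle)
```

Call `await block_heavy_assets(page)` before `page.goto(...)` so the handler is in place for the first navigation.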

Use Compression

Compression formats like gzip and Brotli reduce the size of data transferred over the network.

This means faster downloads, especially when you’re dealing with large volumes of requests. Most modern servers support compression. The client only has to ask for it with an Accept-Encoding header, which many HTTP libraries send by default. That makes enabling it a simple but effective optimization.
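Libraries such as requests and httpx typically negotiate and decode gzip for you. Python's lower-level `urllib` does not, so this sketch shows both halves explicitly. It asks for gzip with an `Accept-Encoding` header and decompresses the body with the standard `gzip` module. Brotli is left out because it needs a third-party package.

```python
import gzip
import urllib.request


def decode_body(body, content_encoding):
    """Reverse the server's Content-Encoding; only gzip and uncompressed bodies are handled."""
    encoding = (content_encoding or "identity").strip().lower()
    if encoding == "gzip":
        return gzip.decompress(body)
    if encoding == "identity":
        return body
    raise ValueError(f"unsupported Content-Encoding: {encoding}")


def fetch_compressed(url, timeout=10):
    # urllib won't ask for compression on its own, so request gzip explicitly
    request = urllib.request.Request(url, headers={"Accept-Encoding": "gzip"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return decode_body(response.read(), response.headers.get("Content-Encoding"))
```

Markup is highly repetitive, so it usually compresses well. The exact savings depend on the page.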

Choose Faster Infrastructure

Your infrastructure plays a major role in how quickly requests are sent and received.

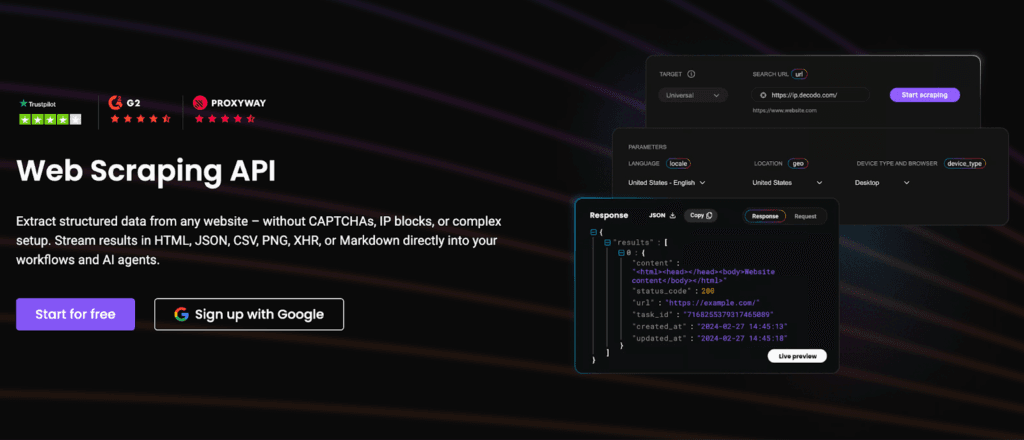

High-quality proxy infrastructure, in particular, can significantly reduce latency. Providers like Decodo optimize routing and connection speed, helping your requests reach target servers faster and more reliably.

How to Achieve High Bandwidth (Throughput)

While latency focuses on how fast each request is, bandwidth is about how much you can handle overall.

In simpler terms, bandwidth is the amount of data your system can process within a given time. And when you’re scraping at scale, this becomes just as important as speed.
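Little's law, which holds for a system in steady state, ties the two metrics together. Throughput equals the number of requests in flight divided by the average time each one takes. The numbers below are illustrative, not benchmarks: 50 concurrent requests, 0.5 s average latency, and 100 KB per page.

```latex
X = \frac{C}{W}
\qquad\Rightarrow\qquad
X = \frac{50}{0.5\,\text{s}} = 100\ \text{requests/s},
\qquad
100\ \text{requests/s} \times 100\,\text{KB} = 10\,\text{MB/s}
```

The formula also shows the two levers. Cut latency or raise concurrency, and throughput rises proportionally until something else becomes the limit: the target server, your proxies, or your network link.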

Here’s how you can increase it effectively:

Parallel Requests at Scale

Concurrency is just the starting point. To truly improve throughput, you need to scale parallel requests while staying within safe limits.

Sending more requests simultaneously increases output, but it needs to be controlled to avoid server blocks or failures.
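One way to raise overall parallelism while keeping each site within a safe limit is to cap concurrency per host rather than globally. This Python sketch keeps one `asyncio.Semaphore` per hostname. `per_host=4` is an arbitrary placeholder, and `fetch` stands in for your own download coroutine.

```python
import asyncio
from collections import defaultdict
from urllib.parse import urlsplit


class HostLimiter:
    """Hands out one semaphore per hostname, created on first use."""

    def __init__(self, per_host=4):
        self._semaphores = defaultdict(lambda: asyncio.Semaphore(per_host))

    def for_url(self, url):
        return self._semaphores[urlsplit(url).netloc]


async def scrape_per_host(urls, fetch, per_host=4):
    """Run all URLs concurrently, but at most `per_host` at a time against any one host."""
    limiter = HostLimiter(per_host)

    async def one(url):
        async with limiter.for_url(url):
            return await fetch(url)

    return await asyncio.gather(*(one(u) for u in urls))
```

Total parallelism grows with the number of distinct hosts, while each host sees at most `per_host` simultaneous requests. Pair it with a global cap if you also need to protect your own machine or proxy quota.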

Distributed Scraping

Instead of relying on a single machine, distributed scraping spreads the workload across multiple systems or cloud instances.

This allows you to handle significantly larger volumes of data without overwhelming a single pipeline.
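A simple way to split work across machines is to assign each URL to a worker by hashing it. This Python sketch uses SHA-256 from `hashlib` rather than the built-in `hash()`. String hashes from `hash()` are randomized per process, so different machines would disagree about assignments. The worker count is whatever your deployment uses.

```python
import hashlib


def shard_for(url, num_workers):
    """Map a URL to a worker index that is stable across processes and machines."""
    digest = hashlib.sha256(url.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_workers


def partition(urls, num_workers):
    """Group URLs into one list per worker."""
    shards = [[] for _ in range(num_workers)]
    for url in urls:
        shards[shard_for(url, num_workers)].append(url)
    return shards
```

Plain modulo hashing reassigns most URLs whenever the number of workers changes. Consistent hashing or a shared work queue avoids that, at the cost of more moving parts.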

Efficient Data Pipelines

How you process data matters just as much as how you collect it.

Streaming data instead of batching it reduces delays and keeps bottlenecks from building up. By processing each record as soon as it arrives, rather than waiting for a whole batch to finish, you keep your pipeline moving smoothly and efficiently.
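In an async scraper, `asyncio.as_completed` yields results in the order they finish, so each record can be written out immediately. In this Python sketch, two things are assumptions. `fetch` is a placeholder coroutine that returns a JSON-serializable record, and `sink` is any writable text stream, such as an open file.

```python
import asyncio
import json


async def stream_results(urls, fetch, sink):
    """Write each record to `sink` as soon as its request finishes."""
    tasks = [asyncio.create_task(fetch(u)) for u in urls]
    for finished in asyncio.as_completed(tasks):
        record = await finished
        sink.write(json.dumps(record) + "\n")  # one JSON line per record
```

Fast pages are saved while slow ones are still downloading, so nothing waits on the slowest request in a batch.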

Proxy Rotation for Scale

As you scale requests, distributing traffic becomes essential.

High-bandwidth scraping depends on sending requests from multiple IP addresses to avoid detection and throttling. Proxy networks like Decodo enable this kind of distribution, allowing you to increase bandwidth without triggering blocks.
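At its simplest, client-side rotation is a round-robin over a pool of proxy endpoints. This Python sketch uses `itertools.cycle` and the standard `urllib.request.ProxyHandler`. The proxy URLs in the usage are hypothetical placeholders. Some providers rotate IPs behind a single gateway endpoint, in which case this logic lives on their side instead.

```python
import itertools
import urllib.request


class ProxyRotator:
    """Round-robin over a proxy pool; each opener is bound to the next proxy."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("need at least one proxy")
        self._cycle = itertools.cycle(proxies)

    def next_proxy(self):
        return next(self._cycle)

    def next_opener(self):
        proxy = self.next_proxy()
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        return urllib.request.build_opener(handler)
```

For example, `ProxyRotator(["http://proxy-a.example:8080", "http://proxy-b.example:8080"]).next_opener().open(url, timeout=10)` sends one request through the next proxy in the pool.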

Latency vs Bandwidth: Finding the Balance

Many scraping systems fail to balance the two effectively, and pushing too hard in either direction can backfire.

- Too many requests: triggers blocks, rate limits, and retries, which drive latency up

- Too few requests: leaves resources idle, resulting in an underutilized system

What Does Balance Look Like?

You need a more controlled and adaptive approach:

Rate Limiting

Sending requests at a controlled pace helps avoid detection and reduces the chances of being throttled or blocked.
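A token bucket is a common way to implement this. Tokens refill at a fixed rate, each request spends one, and a small capacity allows short bursts. The Python sketch below is an asyncio version. The rate you choose for each target is an assumption you have to tune.

```python
import asyncio
import time


class TokenBucket:
    """Allows bursts up to `capacity`, then a steady `rate` requests per second."""

    def __init__(self, rate, capacity=1):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.updated = time.monotonic()
        self._lock = asyncio.Lock()

    async def acquire(self):
        async with self._lock:  # one waiter refills and consumes at a time
            while True:
                now = time.monotonic()
                # Refill based on elapsed time, never beyond capacity
                self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
                self.updated = now
                if self.tokens >= 1:
                    self.tokens -= 1
                    return
                # Sleep roughly until the next token has accumulated
                await asyncio.sleep((1 - self.tokens) / self.rate)
```

For example, `TokenBucket(rate=5)` lets through at most about five requests per second. Call `await bucket.acquire()` before each request.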

Adaptive Concurrency

Instead of using a fixed number of concurrent requests, adaptive systems adjust based on server responses, load, and success rates.
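One well-known pattern for this is AIMD (additive increase, multiplicative decrease), borrowed from TCP congestion control. You nudge the limit up while requests succeed and cut it sharply when the server pushes back. The Python sketch below is a minimal controller. The starting value and bounds are placeholders. What counts as "throttled" (for example a 429, a 503, or a timeout) is your call.

```python
class AdaptiveConcurrency:
    """AIMD controller: grow the limit slowly on success, cut it sharply on throttling."""

    def __init__(self, start=5, minimum=1, maximum=100, increase=1, decrease_factor=0.5):
        self.limit = start
        self.minimum = minimum
        self.maximum = maximum
        self.increase = increase
        self.decrease_factor = decrease_factor

    def can_start(self, in_flight):
        """True if another request may be launched right now."""
        return in_flight < self.limit

    def on_success(self):
        # Additive increase, capped at the maximum
        self.limit = min(self.maximum, self.limit + self.increase)

    def on_throttled(self):
        # Multiplicative decrease, never below the minimum
        self.limit = max(self.minimum, int(self.limit * self.decrease_factor))
```

A scheduler would check `can_start(in_flight)` before launching each request. It would then report every outcome back through `on_success()` or `on_throttled()`.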

Intelligent Routing

Directing requests through the most efficient paths, whether via proxies or optimized networks, helps maintain both speed and reliability.
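A lightweight version of this keeps an exponentially weighted moving average (EWMA) of latency per route and picks the fastest. It also tries another route now and then, so a route that has recovered gets noticed. In this Python sketch, the routes are opaque labels such as proxy endpoints or regions. The smoothing factor, failure penalty, and exploration rate are all placeholder values.

```python
import random


class LatencyRouter:
    """Pick the route (proxy, region, ...) with the lowest smoothed latency."""

    def __init__(self, routes, alpha=0.3, failure_penalty=10.0, explore=0.05):
        self.alpha = alpha                      # weight of the newest sample
        self.failure_penalty = failure_penalty  # seconds charged for a failed request
        self.explore = explore                  # chance of picking a random route
        self.ewma = {route: 1.0 for route in routes}  # neutral starting estimate

    def choose(self):
        if random.random() < self.explore:
            return random.choice(list(self.ewma))
        return min(self.ewma, key=self.ewma.get)

    def record(self, route, latency_seconds, ok=True):
        # Failures count as very slow, so a failing route falls to the back of the line
        sample = latency_seconds if ok else self.failure_penalty
        self.ewma[route] = self.alpha * sample + (1 - self.alpha) * self.ewma[route]
```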

Note: Maximum speed isn’t about sending more requests. It’s about sending the right number of requests, in the most efficient way possible.

When latency and bandwidth are balanced correctly, your scraping system becomes not just faster, but smarter and more resilient.

The Role of Proxies in Speed Optimization

Proxies play a much bigger role than just enabling access. They directly impact:

- Latency: how quickly your requests are routed and responded to

- Success Rate: how often your requests go through without failure

- Bandwidth: how much data you can process at scale

What Happens with Poor Proxy Setup?

Using low-quality or poorly configured proxies can slow down your entire pipeline:

- Slower response times

- Higher failure rates

- More retries, which increase overall latency

What High-Quality Proxy Infrastructure Looks Like

On the other hand, well-optimized proxy systems actively improve performance:

- Faster and more efficient connections

- Stable sessions with fewer interruptions

- Reduced chances of blocks and rate limits

Why It Matters at Scale

As your scraping operations grow, these differences become more noticeable. Small inefficiencies at the proxy level can compound quickly, affecting both speed and cost.

Modern proxy solutions like Decodo are designed with performance in mind, combining intelligent rotation, optimized routing, and high reliability to improve both latency and bandwidth in real-world scraping pipelines.

Practical Optimization Checklist

| Optimization Area | What to Do |
|---|---|
| Concurrency | Use async scraping to handle multiple requests simultaneously |
| Request Handling | Avoid browser automation unless absolutely necessary |
| Resource Optimization | Block images, fonts, and scripts to reduce payload size |
| Data Transfer | Enable compression (gzip/brotli) to speed up responses |
| Scaling | Increase concurrency carefully to maximize throughput |
| Performance Monitoring | Track success rates and response times regularly |
| Proxy Infrastructure | Use reliable, high-quality proxies for better speed and stability |

Low latency and high bandwidth don’t come from one trick. They come from building the right system, one that combines:

- Better architecture

- Smarter use of concurrency

- Efficient and reliable infrastructure

Each layer plays a role, and when they work together, the results compound. You get faster requests, higher bandwidth, and a more stable pipeline overall.

If you’d like to explore proxies in more detail, check out our other in-depth guides on the topic:

- How to Scrape Websites Without Getting Blocked

- Avoid IP Blocking in Web Scraping with IP Rotation

- Google Reviews Scraping Made Easy: Everything You Need to Know

FAQs

How do I implement async scraping?

Async scraping can be implemented using libraries like aiohttp or httpx in Python. These allow you to send multiple requests concurrently without waiting for each one to finish, making your scraping pipeline much faster and more efficient.

How many concurrent requests should I send?

There’s no fixed number. It depends on the target website, your infrastructure, and proxy setup. A good approach is to start low (e.g., 5-10 concurrent requests) and gradually increase while monitoring response times and block rates.

Are datacenter or residential proxies better for scraping?

It depends on your use case. Datacenter proxies are faster and cost-effective for large-scale tasks, while residential proxies offer higher success rates and are better for websites with strict anti-bot measures.

What success rate should a scraping pipeline aim for?

A good scraping pipeline typically aims for a 90-99% success rate. Anything significantly lower may indicate issues with proxies, request patterns, or blocking.

How do I find what is slowing down my scraper?

Start by measuring key metrics like response time, request failures, and throughput. Identify whether delays are caused by network latency, blocking, or inefficient code, and optimize each layer accordingly.
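A small in-process tracker is enough to start. This Python sketch records each request's latency and outcome. It then reports the success rate, median and 95th-percentile latency (via `statistics.quantiles`), and requests per second. Where you export these numbers is up to you.

```python
import statistics
import time


class ScrapeMetrics:
    """Tracks success rate, latency percentiles, and throughput for one scraping run."""

    def __init__(self):
        self.latencies = []
        self.successes = 0
        self.failures = 0
        self.started = time.perf_counter()

    def record(self, latency_seconds, ok):
        self.latencies.append(latency_seconds)
        if ok:
            self.successes += 1
        else:
            self.failures += 1

    def summary(self):
        total = self.successes + self.failures
        elapsed = time.perf_counter() - self.started
        # quantiles(n=100) returns 99 cut points: index 49 is p50, index 94 is p95
        cuts = statistics.quantiles(self.latencies, n=100) if len(self.latencies) >= 2 else None
        return {
            "requests": total,
            "success_rate": self.successes / total if total else 0.0,
            "p50_s": cuts[49] if cuts else None,
            "p95_s": cuts[94] if cuts else None,
            "throughput_rps": total / elapsed if elapsed > 0 else 0.0,
        }
```

Compare the success rate against the range mentioned above and watch p95 latency over time. Together they usually show whether slowdowns come from the network, from blocking, or from your own code.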

Disclosure – This post contains some sponsored links and some affiliate links, and we may earn a commission when you click on the links at no additional cost to you.